Highlights

- Fully guided AI training pipeline that takes you from raw data to a deployed model with intelligent automation at every step

- Smart data-aware visualizations that analyze your dataset and generate charts tailored to your actual columns and distributions

- Interactive decision points where you stay in control of key choices like label selection, feature engineering, and deployment

- Automated feature engineering that creates new features from your existing data to improve model performance

- Built-in chat interface for querying your data, interpreting model results, and getting actionable predictions in natural language

- One-click deployment, monitoring, and reporting to take your model from training to production in a single workflow

What's New

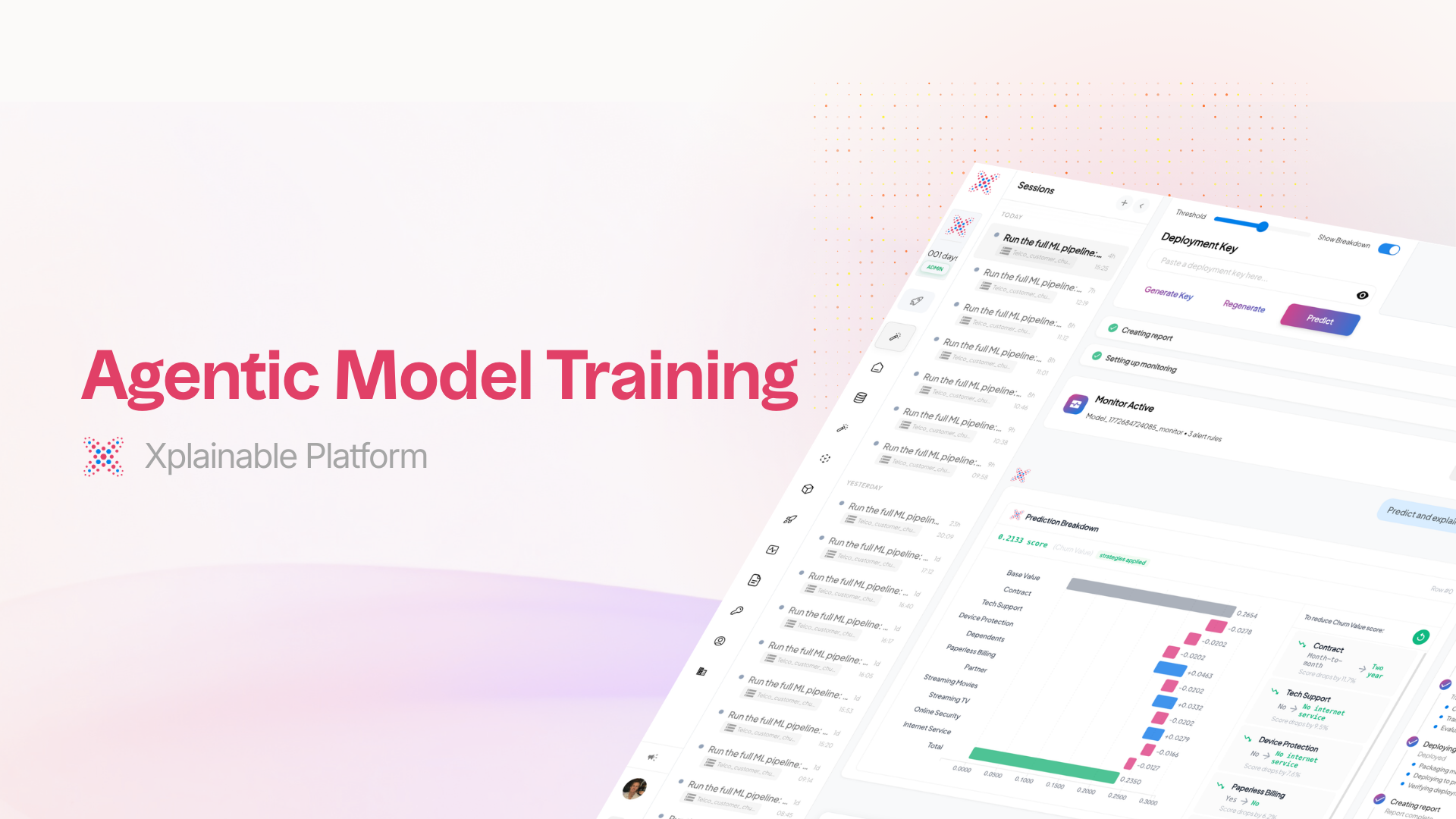

Agentic Auto-Train is a new way to build machine learning models on xplainable. Instead of requiring you to configure each step of the pipeline manually, an AI assistant guides you through the entire process, from data upload to deployment. The system analyzes your dataset, makes recommendations, and adapts to your decisions at every stage.

Upload your data and the assistant immediately gets to work. It scans your dataset for quality issues, identifies column types, checks for missing values, and surfaces a health summary so you understand exactly what you're working with before training begins.
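To make the health summary concrete, here is a minimal sketch of the kind of per-column check described above. It is not xplainable's implementation: the function names `infer_type` and `health_summary`, the row format (a list of dicts), and the rule that empty strings count as missing are all assumptions made for illustration.

```python
def is_missing(value):
    # Assumption: None and empty strings both count as missing.
    return value is None or value == ""


def infer_type(values):
    """Guess a coarse column type from the non-missing values."""
    present = [v for v in values if not is_missing(v)]
    if not present:
        return "empty"
    try:
        for v in present:
            float(v)
        return "numeric"
    except (TypeError, ValueError):
        return "categorical"


def health_summary(rows):
    """Return type, missing rate, and unique count for every column."""
    if not rows:
        return {}
    summary = {}
    for col in rows[0].keys():
        values = [row.get(col) for row in rows]
        missing = sum(1 for v in values if is_missing(v))
        summary[col] = {
            "type": infer_type(values),
            "missing_rate": missing / len(rows),
            "unique": len({v for v in values if not is_missing(v)}),
        }
    return summary


# Hypothetical sample data, not taken from the product.
rows = [
    {"age": "34", "plan": "basic"},
    {"age": "", "plan": "pro"},
    {"age": "51", "plan": "basic"},
    {"age": "40", "plan": None},
]
print(health_summary(rows))
```

A real scan would likely cover more cases (dates, outliers, duplicate rows), but the output shape, one small record per column, is the part a health summary can be built from.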

Intelligent Label Selection

The system analyzes every column in your dataset and recommends the most suitable prediction targets, ranked by confidence. Each recommendation comes with a clear explanation of why that column is a good candidate, along with key statistics like unique values, null rates, and class balance. You choose the label that fits your goal, and the pipeline adapts accordingly.
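As a rough illustration of how the statistics mentioned above (unique values, null rate, class balance) could feed a ranking, the sketch below scores each column with a toy heuristic. The scoring rule, the `max_classes` cutoff, and the names `label_stats` and `rank_candidates` are assumptions for this example only; the product's actual confidence ranking is not documented here.

```python
from collections import Counter


def label_stats(values):
    """Compute unique count, null rate, and minority-class share."""
    present = [v for v in values if v is not None]
    counts = Counter(present)
    total = len(values)
    return {
        "unique": len(counts),
        "null_rate": (total - len(present)) / total if total else 0.0,
        "minority_share": min(counts.values()) / len(present) if present else 0.0,
    }


def rank_candidates(table, max_classes=10):
    """Rank columns as classification targets using a toy score.

    Assumed heuristic: skip columns with fewer than 2 or more than
    max_classes distinct values, then favor few nulls and balanced classes.
    """
    ranked = []
    for name, values in table.items():
        stats = label_stats(values)
        if not 2 <= stats["unique"] <= max_classes:
            continue
        score = (1 - stats["null_rate"]) * stats["minority_share"]
        ranked.append((name, round(score, 3), stats))
    return sorted(ranked, key=lambda item: item[1], reverse=True)


# Hypothetical columns, stored as column name -> list of values.
table = {
    "churned": [1, 0, 1, 0, 0, 1],
    "customer_id": [101, 102, 103, 104, 105, 106],
    "region": ["n", "n", "n", "n", "s", None],
}
for name, score, stats in rank_candidates(table, max_classes=5):
    print(name, score, stats)
```

With this data, `customer_id` is dropped for having too many distinct values, and `churned` ranks above `region` because it is balanced and has no nulls.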

Data-Aware Visualizations

Instead of showing generic placeholder charts, the system now examines your dataset's structure and generates visualizations that are specific to your data. It identifies the most meaningful distributions, correlations, and feature relationships, then creates tailored charts that reference your actual column names and data characteristics. Each visualization comes with a title explaining what insight it's exploring and why it matters.

Automated Feature Engineering

The AI assistant reviews your dataset and generates new engineered features designed to improve model performance. It creates transformation code for each feature, explains the rationale behind each one, and presents them for your review. You can approve individual features, skip ones that don't make sense for your use case, or let the system apply its full set of recommendations.

Interactive Decision Points

Throughout the pipeline, decision cards appear at each critical juncture. Whether you're selecting a prediction target, approving engineered features, reviewing preprocessing strategies, or confirming deployment settings, you always have visibility into what the AI is recommending and the ability to adjust course. The pipeline pauses at each decision point and waits for your input before continuing.

Comprehensive Model Overview

Once training is complete, you get a detailed model profile that goes beyond simple accuracy numbers. The overview includes performance metrics across multiple evaluation criteria, a breakdown of the top contributing features, and key insights about what the model learned from your data. You can see at a glance which features matter most and how the model is making its predictions.

Prediction Testing with Waterfall Breakdown

Test your model directly in the interface by entering values for each feature. The system returns a prediction along with a waterfall chart showing how much each feature pushed the result up or down. Alongside the prediction, you get actionable recommendations highlighting which features have the most room for improvement and which direction of change would shift the outcome.
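The arithmetic behind a waterfall chart can be sketched in a few lines, assuming the prediction decomposes into a base value plus one additive contribution per feature. That additive form, the function name `waterfall`, and the sample numbers are assumptions for illustration, not a description of how the product computes its charts.

```python
def waterfall(base_value, contributions):
    """Turn per-feature contributions into ordered waterfall steps.

    Steps are ordered by absolute contribution, largest first, and each
    step records where the running total starts and ends.
    """
    steps = []
    running = base_value
    ordered = sorted(contributions.items(), key=lambda kv: abs(kv[1]), reverse=True)
    for feature, delta in ordered:
        start = running
        running += delta
        steps.append({"feature": feature, "start": start, "end": running, "delta": delta})
    return steps, running


# Hypothetical contributions for a single prediction.
steps, prediction = waterfall(
    base_value=0.30,
    contributions={"tenure": -0.12, "support_tickets": 0.25, "plan": 0.05},
)
for step in steps:
    direction = "raises" if step["delta"] > 0 else "lowers"
    print(f'{step["feature"]} {direction} the score by {abs(step["delta"]):.2f}')
print(f"prediction: {prediction:.2f}")
```

Each bar in the chart corresponds to one step: it starts where the previous bar ended, so the last bar ends at the final prediction.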

Chat with Your Data and Model

A built-in conversational interface lets you interact with your data and model using natural language. Ask questions about your dataset and get instant query results. Request new visualizations and they're generated on the fly. Ask the model to interpret its own behavior or run what-if predictions. Responses stream in real time with rich formatting, inline charts, and structured data tables.

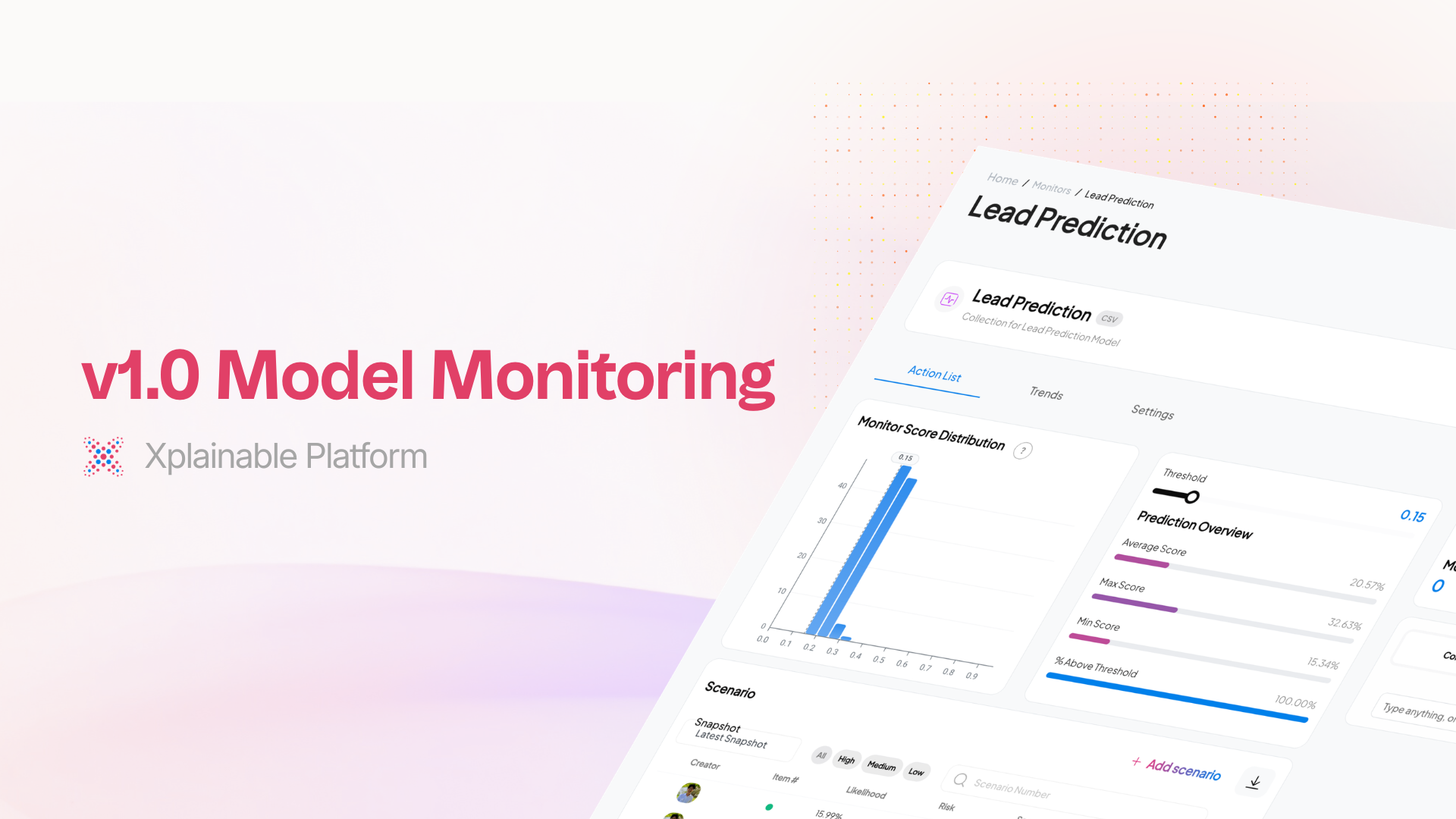

Deploy, Monitor, and Report

When you're satisfied with your model, deploy it with a single click directly from the training workflow. Set up automated monitoring to track model performance over time and catch drift early. Generate detailed reports summarizing your model's capabilities, training process, and key findings. The entire journey from raw data to production-ready model happens within one continuous, guided experience.
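For readers curious what a drift check can look like, the sketch below computes the Population Stability Index (PSI), a common measure of how far a feature's live distribution has moved from its training distribution. Using PSI here is an assumption: the release notes do not say which drift metric the monitoring uses, and the function name `psi`, the equal-width binning, and the `eps` floor are illustrative choices.

```python
import math


def psi(expected, actual, bins=10, eps=1e-6):
    """Population Stability Index between two numeric samples.

    Bins are equal-width over the range of `expected`; values outside that
    range are clamped into the first or last bin. Empty bins are floored
    at `eps` so the logarithm stays defined.
    """
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # guard against a constant column

    def shares(values):
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1
        return [max(c / len(values), eps) for c in counts]

    e, a = shares(expected), shares(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


# Hypothetical training-time and production samples of one feature.
baseline = list(range(100))
shifted = [v + 30 for v in baseline]
print(psi(baseline, baseline))  # identical samples give 0.0
print(psi(baseline, shifted))   # a shifted sample gives a positive value
```

A monitoring job could compute this per feature on a schedule and raise an alert when the value crosses a chosen threshold; the threshold itself is a judgment call and depends on the use case.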

How It Works

- Upload your dataset and the AI assistant begins analyzing it immediately

- Review data health metrics and quality summaries

- Explore auto-generated visualizations tailored to your data

- Select a prediction target from ranked recommendations

- Approve engineered features and preprocessing strategies

- Train your model with optimized settings

- Evaluate performance with detailed metrics and feature breakdowns

- Chat with your model to test predictions and explore insights

- Deploy to production and set up monitoring, all in one flow