Building an Explainable Lead Scoring Model for SaaS: Why Your Sales Team Will Thank You

Transform your lead prioritization from guesswork to transparent, actionable insights that your sales team will actually trust and use.

Your AI says this lead is a 92. Another is a 47. Your sales rep looks at both, shrugs, and works the list alphabetically anyway.

This is not a training problem. It is a trust problem.

85% of B2B buyers choose from their day-one shortlist. If your team is not prioritizing the right leads immediately, you are already invisible.

When lead scores come from a black box, reps treat them as suggestions at best, noise at worst. The fix is not better predictions. It is transparent predictions.

When a rep sees "Score 92: C-level title + visited pricing page + competitor tech stack detected," that is not a number. It is a playbook.

The Lead Scoring Gap: By the Numbers

Before diving into solutions, let us look at the current state of lead scoring in B2B SaaS:

The gap between average and top performers is not about having more data. It is about having better interpretations of that data, and trusting those interpretations enough to act on them immediately.

The Speed-to-Lead Problem

Research confirms what sales leaders have long suspected: timing is everything.

- Responding within 5 minutes makes you 21x more likely to qualify a lead versus waiting 30 minutes

- Companies that follow up within 1 hour see 53% conversion rates versus just 17% for 24-hour follow-ups

- Yet only 27% of leads sent to sales by marketing are actually qualified for sales engagement

This creates a cruel dilemma: move fast and waste time on unqualified leads, or qualify carefully and miss the window entirely. Explainable lead scoring solves this by giving reps instant clarity on why a lead is worth pursuing.

The Problem with Black-Box Lead Scoring

Most AI lead scoring tools work the same way: ingest CRM data, train a model, output a number between 0 and 100. The accuracy might be impressive. The adoption? Not so much.

1. Reps do not trust what they do not understand

When a model says "high priority" but cannot explain why, reps fall back on gut instinct.

People do not easily trust machine recommendations they do not thoroughly understand, especially when their commission is on the line.

A 2025 industry study found that while 70% of high-growth B2B companies have adopted predictive lead scoring, adoption at the rep level remains inconsistent. The reason? Reps cannot explain the score to themselves, let alone to a prospect.

2. Marketing cannot optimize what they cannot see

If your highest-scoring leads all came from a specific campaign, you would want to know that. Black-box models bury these insights inside millions of parameters.

Consider this: SEO-generated leads show 51% MQL-to-SQL conversion, significantly outperforming other channels. But if your scoring model treats all traffic sources equally, you will never surface this insight.
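A per-channel breakdown is easy to compute once lead source is kept as a feature. The sketch below is illustrative only: the field names `channel` and `became_sql` and the sample records are assumptions, not data from any real CRM.

```python
from collections import Counter

def conversion_by_channel(leads):
    """Return the MQL-to-SQL conversion rate for each acquisition channel."""
    mqls = Counter()
    sqls = Counter()
    for lead in leads:
        mqls[lead["channel"]] += 1
        if lead["became_sql"]:
            sqls[lead["channel"]] += 1
    return {channel: sqls[channel] / mqls[channel] for channel in mqls}

# Made-up sample records, purely for illustration.
sample_leads = [
    {"channel": "seo", "became_sql": True},
    {"channel": "seo", "became_sql": True},
    {"channel": "seo", "became_sql": False},
    {"channel": "seo", "became_sql": False},
    {"channel": "paid_social", "became_sql": True},
    {"channel": "paid_social", "became_sql": False},
    {"channel": "paid_social", "became_sql": False},
    {"channel": "paid_social", "became_sql": False},
]

rates = conversion_by_channel(sample_leads)
for channel, rate in sorted(rates.items()):
    print(f"{channel}: {rate:.0%}")
```

If the channel never reaches the model as a feature, a difference like this stays invisible in the scores.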

3. Compliance is coming

The EU AI Act introduces transparency and explainability obligations for certain AI systems, with most provisions applying from 2026. If your scoring model cannot explain itself, it becomes a liability.

What Makes SaaS Lead Scoring Different

B2B SaaS has unique characteristics that make explainability even more critical:

B2B SaaS achieves a 40% MQL-to-SQL conversion rate, far exceeding the 13% cross-industry average. But this only happens when qualification is precise and teams trust the process.

The Three-Level Explanation Advantage

Black-box models give you one thing: a score. Explainable models give you three:

- Global explanations: What patterns drive conversions across all leads?

- Regional explanations: How do specific segments (enterprise vs. SMB, industry verticals) behave differently?

- Local explanations: Why did this specific lead get this specific score?

This layered approach means marketing can optimize campaigns (global), sales managers can coach effectively (regional), and reps can prioritize confidently (local).
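To make the three levels concrete, here is a minimal sketch that derives all of them from one table of per-lead feature contributions. It assumes an additive model whose score is a base value plus per-feature points; the lead records, segment labels, and numbers are invented for illustration and do not come from xplainable.

```python
from collections import defaultdict
from statistics import mean

# Hypothetical per-lead contributions (points added to or removed from a base score).
LEADS = [
    {"id": "lead-1", "segment": "enterprise",
     "contributions": {"job_title": 18.0, "pricing_page_visit": 12.0, "company_size": 9.0}},
    {"id": "lead-2", "segment": "enterprise",
     "contributions": {"job_title": 6.0, "pricing_page_visit": 0.0, "company_size": 11.0}},
    {"id": "lead-3", "segment": "smb",
     "contributions": {"job_title": 10.0, "pricing_page_visit": 14.0, "company_size": -4.0}},
    {"id": "lead-4", "segment": "smb",
     "contributions": {"job_title": -2.0, "pricing_page_visit": 8.0, "company_size": -6.0}},
]

def mean_abs_contribution(leads):
    """Average absolute contribution per feature over a set of leads."""
    values_by_feature = defaultdict(list)
    for lead in leads:
        for feature, value in lead["contributions"].items():
            values_by_feature[feature].append(abs(value))
    return {feature: mean(values) for feature, values in values_by_feature.items()}

def global_explanation(leads):
    """Which features matter most across all leads."""
    return mean_abs_contribution(leads)

def regional_explanation(leads):
    """The same summary, computed separately for each segment."""
    by_segment = defaultdict(list)
    for lead in leads:
        by_segment[lead["segment"]].append(lead)
    return {segment: mean_abs_contribution(group) for segment, group in by_segment.items()}

def local_explanation(leads, lead_id):
    """Contributions for one lead, largest effect first."""
    lead = next(item for item in leads if item["id"] == lead_id)
    return sorted(lead["contributions"].items(), key=lambda kv: abs(kv[1]), reverse=True)

print(global_explanation(LEADS))
print(regional_explanation(LEADS)["smb"])
print(local_explanation(LEADS, "lead-3"))
```

All three views come from the same numbers, which is why they cannot contradict each other.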

What Explainable Lead Scoring Looks Like

Explainable AI is not about dumbing down your model. It is about building transparency into the architecture from the start.

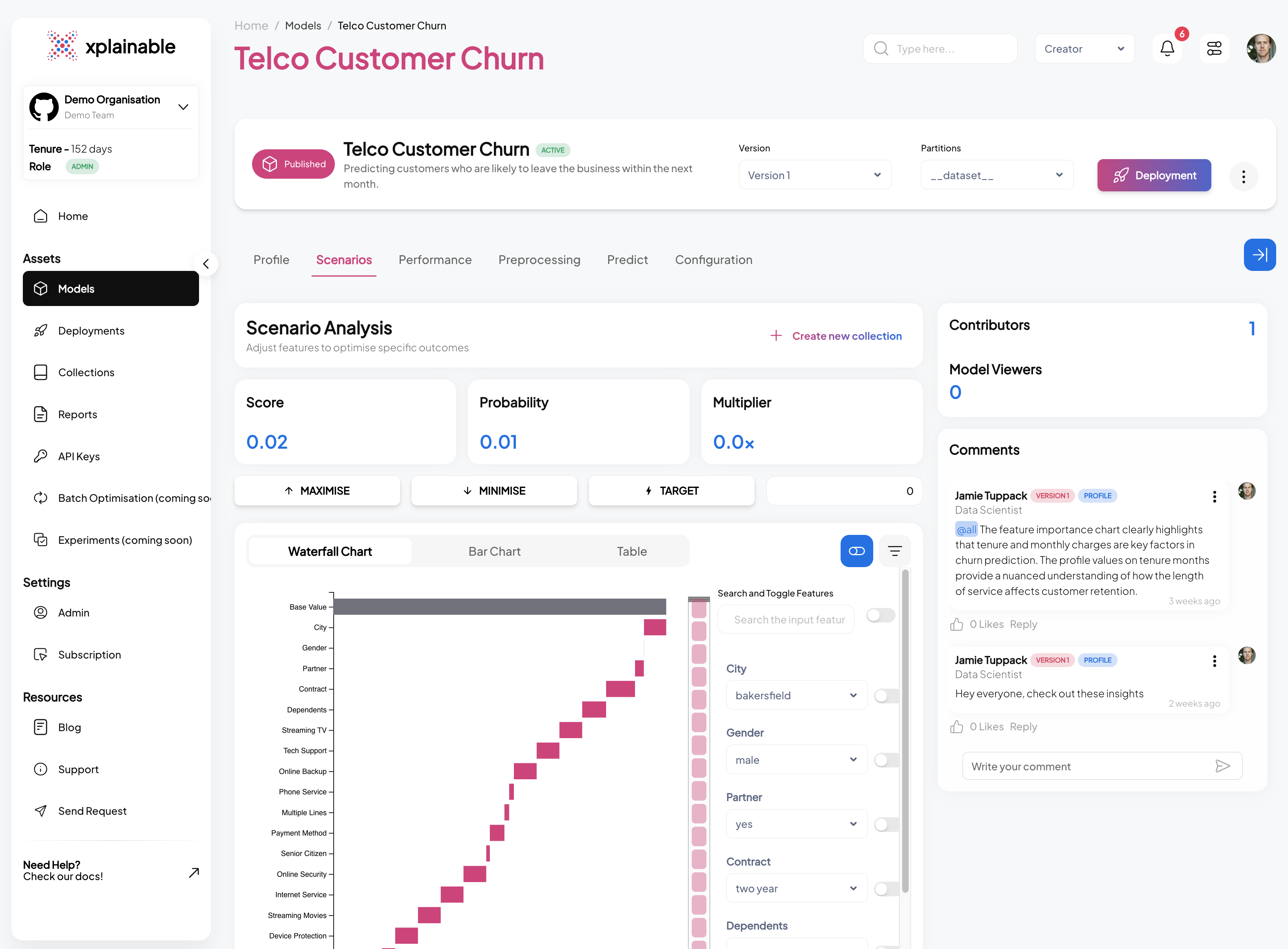

With xplainable, every prediction comes with a model profile showing exactly which features contributed to the score:

Your rep does not just see "47." They see why it is 47 and what would change it.

How This Differs from SHAP and LIME

Most "explainable AI" tools like SHAP or LIME bolt explanations onto existing black-box models. They are post-hoc approximations of what the model might be doing.

xplainable takes a different approach: the model itself is inherently interpretable. This means:

- No accuracy tradeoff: Glass-box architecture matches XGBoost and LightGBM performance

- Real-time explanations: No separate model-fitting step for each prediction

- Consistent explanations: No approximation variance between runs

Algorithms like Logistic Regression remain relevant in regulated industries where maximum transparency and explainability are required. xplainable delivers that transparency without sacrificing predictive power.
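The idea of an inherently interpretable model can be illustrated with plain logistic regression, where the explanation is read directly from the model's own arithmetic rather than estimated afterward. This is a sketch of that general principle, not of xplainable's algorithm; the intercept, coefficients, and feature names are hypothetical.

```python
import math

# Hypothetical fitted parameters for a tiny logistic regression lead model.
INTERCEPT = -2.0
COEFFICIENTS = {"is_c_level": 1.5, "visited_pricing": 1.0, "uses_competitor": 0.5}

def explain(lead):
    """Return (conversion probability, per-feature log-odds contributions)."""
    contributions = {
        name: coefficient * lead.get(name, 0.0)
        for name, coefficient in COEFFICIENTS.items()
    }
    log_odds = INTERCEPT + sum(contributions.values())
    probability = 1.0 / (1.0 + math.exp(-log_odds))
    return probability, contributions

lead = {"is_c_level": 1.0, "visited_pricing": 1.0, "uses_competitor": 1.0}
probability, contributions = explain(lead)
print(f"probability={probability:.3f}")
for name, value in contributions.items():
    print(f"  {name}: {value:+.2f} log-odds")
```

Because the contributions are the prediction, there is no second model to fit and no sampling involved, so repeated calls return identical explanations.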

Scenario Analysis: The "What If" Question

xplainable scenario analysis lets reps ask: "What would move this lead from 47 to 75?"

"If they visit the pricing page and their company size increases to 500+, the score would reach 78."

Now your rep knows exactly what signals to watch for, or what qualifying questions to ask on the next call.
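A what-if query of this kind is simple to sketch for an additive score. The example below is not xplainable's scenario analysis API; the base score and point tables are invented so that the arithmetic reproduces the 47 and 78 in the example above.

```python
# Hypothetical additive scorecard: base score plus points per feature value.
BASE_SCORE = 30
POINTS = {
    "job_title": {"individual": 0, "manager": 12, "c_level": 25},
    "company_size": {"1-49": 0, "50-499": 5, "500+": 20},
    "visited_pricing": {False: 0, True: 16},
}

def score(lead):
    """Sum the base score and the points for each feature value."""
    return BASE_SCORE + sum(POINTS[feature][lead[feature]] for feature in POINTS)

def what_if(lead, **changes):
    """Score the lead as if the given features had different values."""
    return score({**lead, **changes})

def best_single_changes(lead):
    """Rank every single-feature change by the score gain it would produce."""
    current = score(lead)
    options = []
    for feature, table in POINTS.items():
        for value in table:
            if value != lead[feature]:
                gain = what_if(lead, **{feature: value}) - current
                options.append((gain, feature, value))
    return sorted(options, key=lambda option: option[0], reverse=True)

lead = {"job_title": "manager", "company_size": "50-499", "visited_pricing": False}
print(score(lead))
print(what_if(lead, visited_pricing=True, company_size="500+"))
print(best_single_changes(lead)[:2])
```

The ranked list is what turns a score into a next step: the top entries are the signals worth watching for or asking about.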

Practical Applications

For Sales Development:

- Identify which qualifying questions unlock the highest score increases

- Prioritize follow-up sequences based on achievable score improvements

- Focus discovery calls on features that would move the needle

- Understand what hesitation signals are hurting the score

- Tailor demos to address the specific factors dragging down conversion probability

- Build proposals that directly address identified concerns

- Design nurture campaigns that target low-contribution features

- Identify which content pieces correlate with score improvements

- A/B test landing pages based on their impact on scoring factors

The ROI Case for Explainable Lead Scoring

Let us talk numbers. Companies implementing ML-based lead scoring report:

But here is the catch: these numbers assume adoption. A model that sits unused delivers zero ROI. Explainability is not a nice-to-have; it is the difference between a successful implementation and an expensive shelf ornament.

The Hidden Costs of Black-Box Scoring

Beyond missed conversions, opaque scoring creates downstream problems:

- Training overhead: New reps cannot learn the system because there is nothing to learn

- Debugging difficulty: When scores seem wrong, there is no way to diagnose why

- Stakeholder friction: Sales blames marketing for bad leads; marketing blames the model

- Compliance risk: Cannot demonstrate fair lending, hiring, or service practices

Building Your Model: A Practical Walkthrough

Step 1: Prepare your data

Pull historical lead data from your CRM with conversion outcomes. For reliable models, you need:

- At least 500-1,000 completed sales processes

- Both converted and non-converted leads

- Ideally 12-24 months of sales history

- Minimum 100 successful conversions
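These thresholds can be checked before any training run. The sketch below assumes each lead is a dict with a `created` date and a `converted` flag (hypothetical field names), and uses the lower bound of each range above as the cutoff.

```python
from datetime import date

MIN_CLOSED_LEADS = 500     # lower bound of the 500-1,000 range
MIN_CONVERSIONS = 100
MIN_HISTORY_MONTHS = 12    # lower bound of the 12-24 month range

def check_training_data(leads):
    """Report which of the minimum data requirements the lead history meets."""
    conversions = sum(1 for lead in leads if lead["converted"])
    span_months = 0
    if leads:
        first = min(lead["created"] for lead in leads)
        last = max(lead["created"] for lead in leads)
        span_months = (last.year - first.year) * 12 + (last.month - first.month)
    return {
        "enough_leads": len(leads) >= MIN_CLOSED_LEADS,
        "enough_conversions": conversions >= MIN_CONVERSIONS,
        "both_outcomes": 0 < conversions < len(leads),
        "enough_history": span_months >= MIN_HISTORY_MONTHS,
    }

# Tiny made-up history: far too small, so most checks fail.
tiny_history = [
    {"created": date(2024, 1, 10), "converted": True},
    {"created": date(2024, 3, 2), "converted": False},
]
print(check_training_data(tiny_history))
```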

Include:

- Behavioral signals (page visits, downloads, email engagement, free trial activity)

- Firmographics (company size, industry, revenue, tech stack)

- Demographics (job title, seniority, department)

- Engagement recency (days since last activity, email open rates)
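As a rough illustration of how these four groups become one model-ready row, the sketch below derives a seniority level from the job title and a recency value from the last activity date. The raw field names and the keyword lists are assumptions; a real CRM export will need its own mapping.

```python
import re
from datetime import date

# Hypothetical keyword sets, matched against whole words in the title.
SENIORITY_WORDS = {
    "c_level": {"chief", "ceo", "cto", "cfo", "coo"},
    "vp": {"vp", "vice"},
    "director": {"director", "head"},
    "manager": {"manager"},
}

def seniority(job_title):
    """Map a free-text job title to a coarse seniority level."""
    words = set(re.findall(r"[a-z]+", job_title.lower()))
    for level, keywords in SENIORITY_WORDS.items():
        if words & keywords:
            return level
    return "individual"

def build_features(raw, today):
    """Combine behavioral, firmographic, demographic, and recency signals."""
    sent = raw["emails_sent"]
    return {
        "pricing_page_visits": raw["pricing_page_visits"],             # behavioral
        "company_size": raw["company_size"],                           # firmographic
        "industry": raw["industry"],                                   # firmographic
        "seniority": seniority(raw["job_title"]),                      # demographic
        "days_since_last_activity": (today - raw["last_activity"]).days,  # recency
        "email_open_rate": raw["emails_opened"] / sent if sent else 0.0,  # recency
    }

raw_lead = {
    "pricing_page_visits": 3, "company_size": 220, "industry": "fintech",
    "job_title": "Director of Engineering", "last_activity": date(2025, 1, 1),
    "emails_sent": 12, "emails_opened": 3,
}
print(build_features(raw_lead, today=date(2025, 1, 15)))
```

Matching on whole words rather than substrings matters here: "director" contains the letters "cto", so a naive substring check would misclassify it.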

Step 2: Train with xplainable

```python
import xplainable as xp
from xplainable.core.models import XClassifier
from xplainable.core.optimisation import XParamOptimiser

# Initialize and train
model = XClassifier()
model.fit(X_train, y_train)

# Optimize hyperparameters using Bayesian optimization
optimiser = XParamOptimiser(model, X_train, y_train)
optimiser.optimise(metric='roc-auc')

# View the model profile
model.explain()
```

The model trains using a glass-box architecture. No post-hoc explanations bolted on afterward.
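The `roc-auc` metric used for optimisation has a direct reading for lead scoring: it is the probability that a randomly chosen converted lead is scored above a randomly chosen non-converted one. The sketch below computes it by brute force to show the definition; it is a teaching aid with made-up labels and scores, not a replacement for a library implementation.

```python
def roc_auc(labels, scores):
    """Fraction of (converted, non-converted) pairs ranked correctly; ties count half."""
    positives = [s for y, s in zip(labels, scores) if y == 1]
    negatives = [s for y, s in zip(labels, scores) if y == 0]
    if not positives or not negatives:
        raise ValueError("ROC-AUC needs at least one converted and one non-converted lead")
    wins = 0.0
    for p in positives:
        for n in negatives:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(positives) * len(negatives))

# Made-up hold-out labels (1 = converted) and model scores.
labels = [1, 0, 1, 0]
scores = [0.9, 0.8, 0.4, 0.1]
print(roc_auc(labels, scores))
```

A value of 0.5 means the ranking is no better than chance, and 1.0 means every converted lead outranks every non-converted one.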

Step 3: Preprocess consistently

Production consistency requires standardized preprocessing:

```python
from xplainable.preprocessing import XPipeline

# Clipper, Imputer, and LogTransformer must be imported as well
# (their import path is not shown here).
pipeline = XPipeline([
    ('clip_outliers', Clipper(lower=0.01, upper=0.99)),
    ('impute_missing', Imputer(strategy='median')),
    ('log_transform', LogTransformer(columns=['revenue', 'employees'])),
])

X_processed = pipeline.fit_transform(X_train)
```
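What "consistent" means in practice is that clip bounds and fill values are learned once from training data and then reused unchanged at scoring time. The standard-library sketch below shows that fit-then-apply pattern for a single numeric column. It is not xplainable's pipeline; the function names are hypothetical, and `log1p` is an assumed choice so that zero values stay finite.

```python
import math
from statistics import median, quantiles

def fit_preprocessor(train_values):
    """Learn 1st/99th percentile clip bounds and the median from training data."""
    observed = sorted(v for v in train_values if v is not None)
    cuts = quantiles(observed, n=100, method="inclusive")  # 99 percentile cut points
    return {"low": cuts[0], "high": cuts[98], "median": median(observed)}

def apply_preprocessor(values, params):
    """Impute, clip, and log-transform using parameters learned at fit time."""
    processed = []
    for value in values:
        if value is None:
            value = params["median"]                          # impute missing
        value = min(max(value, params["low"]), params["high"])  # clip outliers
        processed.append(math.log1p(value))                   # log transform
    return processed

# Made-up training column (for example, employee counts).
train_employees = list(range(1, 102))
params = fit_preprocessor(train_employees)

# At scoring time the stored parameters are reused, never refitted.
new_leads = [None, 0, 1000, 50]
print(params)
print(apply_preprocessor(new_leads, params))
```

Refitting on incoming leads would shift the bounds and the median, so the same raw value could produce different scores on different days.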

Step 4: Deploy for real-time scoring

xplainable models run in sub-millisecond inference, so you can score leads the moment they hit your CRM. No batch jobs, no waiting.

```python
# Deploy to xplainable Cloud
model.deploy(name='lead_scoring_v1')

# Generate API key for CRM integration
api_key = model.create_api_key()
```

Step 5: Surface explanations to your team

Use the model profile to push explanations directly into Salesforce, HubSpot, or your CRM of choice. Reps see the score and the reasoning in context.
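One possible shape for that hand-off is a short reason string written to a custom CRM field next to the score. The sketch below builds such a string from per-feature contributions; the field names `lead_score` and `lead_score_reason`, the contribution values, and the choice to show only the top three positive drivers are all assumptions, and the actual CRM API call is left out.

```python
def format_explanation(score, contributions, top_n=3):
    """Build a one-line reason from the strongest positive contributions."""
    positive = sorted(
        ((label, value) for label, value in contributions.items() if value > 0),
        key=lambda item: item[1],
        reverse=True,
    )
    reasons = " + ".join(label for label, _ in positive[:top_n])
    if not reasons:
        reasons = "no positive signals"
    return f"Score {round(score)}: {reasons}"

def crm_payload(score, contributions):
    """Hypothetical field mapping for a CRM record update."""
    return {
        "lead_score": round(score),
        "lead_score_reason": format_explanation(score, contributions),
    }

# Made-up contributions for one lead.
contributions = {
    "C-level title": 25,
    "visited pricing page": 16,
    "competitor tech stack detected": 11,
    "stale activity": -4,
}
print(crm_payload(92, contributions))
```

Keeping the reason to a few plain-language drivers is a design choice: it fits in a list view, and the full breakdown can live on the record detail page.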

Industry Benchmarks: Where Do You Stand?

Here is how to contextualize your lead scoring performance:

HR Tech sees 3-6% visitor-to-lead conversion because buyers arrive with strong demo intent. Cybersecurity averages 1-2% due to longer, risk-heavy evaluations with more stakeholders.

Your scoring model should account for these baseline differences. A 2% conversion rate might be excellent in cybersecurity SaaS and mediocre in HR Tech.
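A small helper makes the comparison explicit. The ranges below are the two visitor-to-lead figures quoted above; the vertical keys, function name, and traffic numbers are illustrative assumptions.

```python
# Typical visitor-to-lead conversion ranges quoted above, as fractions.
BASELINES = {
    "hr_tech": (0.03, 0.06),
    "cybersecurity": (0.01, 0.02),
}

def rate_against_baseline(vertical, visitors, leads):
    """Return the conversion rate and where it sits relative to the vertical's range."""
    low, high = BASELINES[vertical]
    rate = leads / visitors
    if rate < low:
        verdict = "below typical range"
    elif rate > high:
        verdict = "above typical range"
    else:
        verdict = "within typical range"
    return rate, verdict

# The same 2% rate reads very differently in the two verticals.
print(rate_against_baseline("cybersecurity", visitors=10_000, leads=200))
print(rate_against_baseline("hr_tech", visitors=10_000, leads=200))
```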

Why Sales Teams Actually Adopt This

The difference between "AI-assisted sales" and "sales teams ignoring AI" comes down to one thing: can the rep act on it?

Explainable lead scoring gives reps:

- Confidence: They understand why a lead is hot, so they prioritize accordingly

- Talking points: The model reveals what the lead cares about (pricing page visits, integration docs)

- Accountability: When scores are transparent, there is no hiding behind "the AI was wrong"

- Coaching opportunities: Managers can review decisions against documented reasoning

- Faster onboarding: New reps learn the qualification criteria from the model itself

The Adoption Test

Ask your sales team these questions:

- Can you explain why your top lead is scored higher than your second-best lead?

- What would need to change for a medium-priority lead to become high-priority?

- Which of your qualification criteria has the biggest impact on conversion?

If they cannot answer confidently, your scoring system has a trust problem.

Conclusion

Black-box lead scoring is a solved problem that nobody uses. Explainable lead scoring is the version your team will actually adopt.

With xplainable, you get:

- Transparent predictions your reps can trust

- Scenario analysis for strategic outreach

- Real-time scoring with sub-millisecond inference

- Compliance-ready explainability for regulated industries

- Three-level explanations (global, regional, local) for different stakeholders

The data is clear: companies using behavioral scoring models achieve 39-40% MQL-to-SQL conversion rates, far above the 13% average. But only if the team trusts and uses the scores.

Ready to see how it works? We will score your actual leads, with full explanations, in under 30 minutes.

Get started with xplainable

Build transparent ML models with real-time explanations and deploy in minutes.