How Xplainable satisfies all three regulators at once

Five overlapping regulatory deadlines are converging on Australian non-bank lenders, BNPL providers and Tier-2 insurers. Here's what's changing, why your current options fall short, and what audit-ready AI actually looks like.

Australian non-bank lenders, BNPL providers and Tier-2 insurers are facing the most concentrated regulatory pressure the sector has seen in a decade. Five overlapping deadlines are converging — and the window to act is closing fast.

What just changed

In December 2024, the Privacy and Other Legislation Amendment Act 2024 became law. Buried inside it is a set of new obligations (APP 1.7, 1.8 and 1.9) that require any organisation using automated decision-making to disclose how those systems work when they significantly affect a customer's rights or interests.

That means every credit decision, every hardship assessment, every claims outcome generated by a model is in scope.

The hard deadline: 10 December 2026. No grace period. No grandfathering of existing systems. If your model was built five years ago, it still needs to comply with decisions made from that date forward.

Penalties for non-compliance: up to $50M per breach, or 30% of adjusted turnover — whichever is higher.

The compliance calendar your peers are staring at

- 1 Jul 2025: APRA CPS 230 in force. Operational risk and third-party AI governance requirements live.

- 1 Jan 2026: ASIC RG 271 IDR expansion. Written reasons for dispute outcomes now mandatory.

- 1 Jul 2026: CPS 230 third-party contract uplift deadline. AI vendors must meet right-of-access, BCP, and incident notification requirements.

- 1 Oct 2026: AFIA Code of Practice. Non-bank lenders and BNPL face industry code obligations on transparent automated decisions.

- 10 Dec 2026: Privacy Act ADM duties commence. $50M per-breach penalties. Full stop.

This isn't one deadline. It's five, stacked across 18 months, each hitting a different regulator's desk.
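To make the stacking concrete, the calendar above can be turned into a simple countdown. This is a minimal sketch: the dates are the ones listed in this article, while the helper names (`days_remaining`, `span_in_days`) are illustrative and not part of any product.

```python
from datetime import date

# Deadlines exactly as listed in the compliance calendar above.
DEADLINES = {
    "APRA CPS 230 in force": date(2025, 7, 1),
    "ASIC RG 271 IDR expansion": date(2026, 1, 1),
    "CPS 230 third-party contract uplift": date(2026, 7, 1),
    "AFIA Code of Practice": date(2026, 10, 1),
    "Privacy Act ADM duties commence": date(2026, 12, 10),
}


def days_remaining(today):
    """Map each deadline to days from `today`; negative means already in force."""
    return {name: (due - today).days for name, due in DEADLINES.items()}


def span_in_days():
    """Days between the first and the last deadline in the calendar."""
    return (max(DEADLINES.values()) - min(DEADLINES.values())).days


if __name__ == "__main__":
    for name, days in days_remaining(date.today()).items():
        status = "already in force" if days < 0 else f"{days} days left"
        print(f"{name}: {status}")
```

Running it on any given day shows which obligations are already live and how much runway remains for the rest.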

The enforcement environment is already live

Regulators aren't waiting for December. The precedents are already on the books:

- $40M — Federal Court penalty against IAG/NRMA for an opaque algorithmic pricing decision

- $15.5M — NAB hardship penalty. ASIC is now also suing Resimac — the first non-bank lender ever sued for hardship failures, with a case management hearing in March 2026

- ACCC has issued penalty notices for non-transparent automated decision-making and signalled this is a precursor to heavier enforcement once ADM duties commence

Your CRO has read every one of these.

Why your current options won't get you there

Most institutions are weighing three paths. All three fail, just on different regulators' desks.

Option 1: Bolt SHAP or LIME onto your existing model

Post-hoc. Not real-time. SHAP outputs aren't customer-readable, so your operations team still rewrites reason codes by hand for every AFCA response. Fails RG 271 from January 2026.

Option 2: Keep running the internal Python notebook

No material service provider designation. No documented BCP. No APRA right-of-access clause. No 24-hour incident notification SLA. That's 0 of 4 CPS 230 audit requirements met. Fails CPS 230 from July 2026.

Option 3: Commission a Big-4 consulting build

A$400K–1.2M and six-plus months minimum. A January 2026 kickoff lands in production roughly three months after the December deadline. Fails the calendar, full stop.

Build in-house? A senior financial services data scientist costs A$200–300K per year. You can't hire your way out of a window that's already half expired.

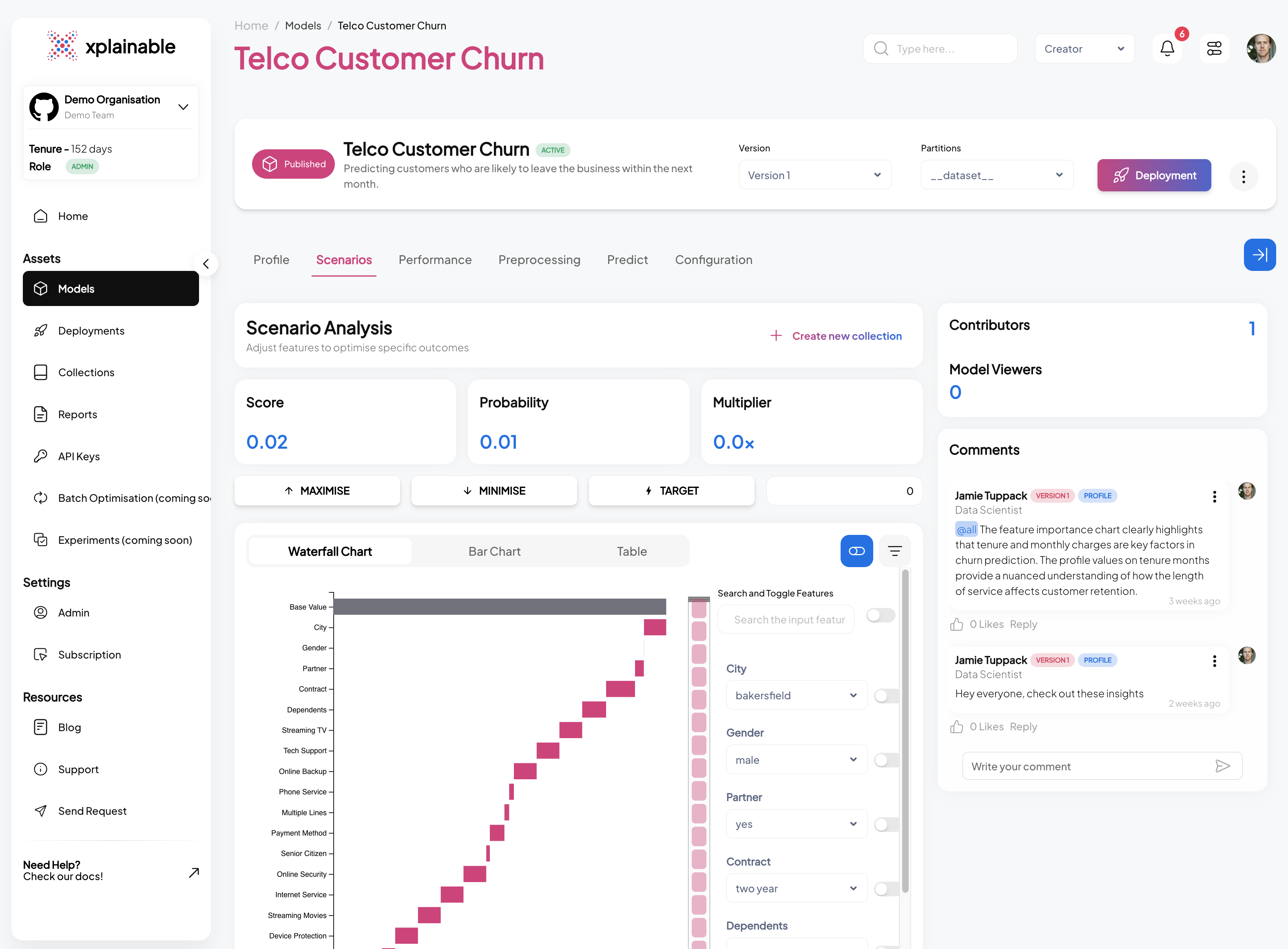

What audit-ready actually looks like

The difference between compliant and non-compliant comes down to one architectural question: is explainability built in, or bolted on?

Post-hoc tools like SHAP explain decisions after the fact. They require a human to translate outputs into reason codes. They introduce a gap between what the model decided and what the explanation says — a gap a regulator or AFCA adjudicator will find.

Inherently explainable models are different. Every prediction ships with:

- The drivers behind it, in plain English — not raw feature weights

- A customer-readable reason mapped directly to RG 271 language, generated in one click

- A recommended action, dollar outcome, and confidence interval built for operations teams, not data scientists

- A full audit trail that satisfies APP 1.7–1.9, RG 271, and CPS 230 simultaneously

No translation layer. No manual rewriting. No gap for a regulator to find.
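As a rough illustration of what "built in" means, consider an additive scorecard where the score is simply a base value plus one contribution per feature. The contributions are the explanation, so the reason codes can be read straight off the same numbers that produced the decision. This is a toy sketch, not Xplainable's implementation or API: the features, thresholds, weights, cutoff and reason wording are all hypothetical.

```python
from dataclasses import dataclass

# Hypothetical scorecard. Every feature, threshold, weight and reason text
# below is made up for illustration only.
BASE_SCORE = 0.50
# feature -> (threshold, contribution applied when value > threshold, reason text)
RULES = {
    "missed_payments_12m": (1, -0.25, "More than one missed payment in the last 12 months"),
    "debt_to_income": (0.45, -0.15, "Debt-to-income ratio above 45%"),
    "months_at_employer": (24, 0.10, "More than two years with current employer"),
}


@dataclass
class Decision:
    score: float
    approved: bool
    contributions: dict  # per-feature drivers; these sum (with the base) to the score
    reasons: list        # plain-English adverse reasons, strongest first


def decide(applicant, cutoff=0.40):
    """Score an applicant and return the decision together with its drivers."""
    contributions = {
        feature: (weight if applicant[feature] > threshold else 0.0)
        for feature, (threshold, weight, _) in RULES.items()
    }
    score = round(BASE_SCORE + sum(contributions.values()), 4)
    # Adverse drivers, most negative contribution first.
    adverse = sorted((c, f) for f, c in contributions.items() if c < 0)
    reasons = [RULES[feature][2] for _, feature in adverse]
    return Decision(score, score >= cutoff, contributions, reasons)


if __name__ == "__main__":
    applicant = {"missed_payments_12m": 2, "debt_to_income": 0.50, "months_at_employer": 30}
    decision = decide(applicant)
    print(decision.approved, decision.score)
    for reason in decision.reasons:
        print("-", reason)
```

Because the reasons are derived from the same contributions that make up the score, there is no separate explanation step that could drift from the decision itself.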

How Xplainable is different

Xplainable is inherently explainable ML, built natively for Australian regulated finance, not retrofitted to it.

- Native explainability: per-feature attribution is baked into the model architecture. Every prediction shows its drivers without post-hoc processing.

- One-click reason codes: auto-generates plain-English AFCA-ready letters and decision notices directly from model output.

- Rapid refitting: update parameters weekly as economic conditions shift. No full retraining. No data science team required for routine recalibration.

- CPS 230 ready from day one: material service provider designation, documented BCP, right-of-access clause, and 24-hour incident notification SLA, plus a sub-processor register. All four audit requirements met.

- Production-ready fast: deployable against your existing decisioning stack. The governance documentation is already written.

One architecture. Three regulators satisfied. APP 1.7–1.9 · ASIC RG 271 · APRA CPS 230.

The window is closing

More than 30 Australian institutions are each writing a compliance remediation plan right now. The organisations that arrive at December 2026 audit-ready will be those that moved before the implementation market tightened.

There are roughly 210 days left. That's not enough time for a Big-4 build. It's not enough time to hire and onboard. It is enough time to deploy Xplainable.

30 minutes. Your data. A live answer.

We'll prepare three explainable model outputs against a synthetic version of your collections cohort and walk you through the audit trail end-to-end. No NDA required for the first call.
