The Importance of Explainability in Machine Learning Models

Emphasising the importance of understanding and interpreting the decisions made by our artificial counterparts.

As Artificial Intelligence (AI) improves and AI models continue to be deployed, Explainable Artificial Intelligence (XAI), also known as Explainable Machine Learning (EML) or Interpretable Artificial Intelligence (IAI), is becoming more important. XAI is a growing area within Machine Learning (ML) because individuals, organisations, and governments are rightly asking, “Can we explain model predictions, and how can we use them to make better decisions?” In this blog post, we will explore three reasons why explainability is important in machine learning.

1. Decision-making

XAI tools play an important role in improving the accuracy of AI models and in making more effective decisions. They provide insights into how machine learning models arrive at their predictions. By understanding a model's decision-making process, we can make meaningful improvements to its accuracy and, in turn, make more effective decisions. Given that we rely on machine learning models to make real decisions in business, science, and society, XAI tools will continue to grow in importance across the end-to-end deployment pipeline for machine learning models.

There are two main ways XAI tools help improve accuracy. First, they can help identify when a machine learning model is relying on wrong, incomplete, or insufficient data. If this occurs, the model may make incorrect assumptions when presented with new data, harming the quality of decisions based on its predictions. Data scientists can use this insight to collect more or different data on which the model can be retrained. Second, XAI tools can help identify when a model is overfitting to the training data, which can lead to poor performance on new data. This insight can prompt machine learning engineers to tune the models they build. For example, an engineer might reduce the number of features in the model or perform more data augmentation before training.
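To make the first point more concrete, here is a minimal sketch of permutation importance, one common model-agnostic explanation technique (it is not necessarily how Xplainable or any other specific XAI tool works). The idea is to shuffle one feature at a time and measure how much the model's accuracy drops: a large drop means the model leans heavily on that feature. If a feature that should be irrelevant scores highly, that is a hint the model is relying on the wrong data. The function names, toy data, and `toy_model` below are hypothetical and exist only for illustration.

```python
import random
from statistics import mean


def accuracy(predict, rows, labels):
    """Fraction of rows where the model's prediction matches the label."""
    return mean(1.0 if predict(r) == t else 0.0 for r, t in zip(rows, labels))


def permutation_importance(predict, rows, labels, n_repeats=10, seed=0):
    """Mean drop in accuracy when each feature column is shuffled independently.

    Hypothetical helper: `predict` takes one row (a list of feature values)
    and returns a class label.
    """
    rng = random.Random(seed)
    baseline = accuracy(predict, rows, labels)
    n_features = len(rows[0])
    importances = []
    for j in range(n_features):
        drops = []
        for _ in range(n_repeats):
            column = [r[j] for r in rows]
            rng.shuffle(column)  # break the link between feature j and the label
            shuffled = [r[:j] + [v] + r[j + 1:] for r, v in zip(rows, column)]
            drops.append(baseline - accuracy(predict, shuffled, labels))
        importances.append(mean(drops))
    return importances


# Toy data: three random features, but the label depends only on feature 0.
data_rng = random.Random(42)
rows = [[data_rng.gauss(0, 1) for _ in range(3)] for _ in range(500)]
labels = [1 if r[0] > 0 else 0 for r in rows]


def toy_model(row):
    # Hypothetical model that only ever looks at feature 0
    return 1 if row[0] > 0 else 0


importances = permutation_importance(toy_model, rows, labels)
# Features 1 and 2 score exactly 0.0 because toy_model never reads them;
# feature 0 scores roughly 0.5 because shuffling it reduces the model to guessing.
print(importances)
```

Because the technique only needs a `predict` function, it works with any model, which is why libraries such as scikit-learn offer a ready-made version.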

Accurate machine learning models can provide significant business value. They can help businesses make better decisions based on data-driven insights, which can lead to increased efficiency, cost savings, and revenue growth. For example, a financial institution can use accurate machine learning models to detect fraud more effectively, which can save the company millions of dollars. Similarly, an e-commerce company can use accurate machine learning models to predict customer behaviour and tailor their offerings accordingly, which can lead to increased sales and customer loyalty. By using XAI tools to improve the accuracy of their machine learning models, businesses can ensure that they are making informed decisions based on reliable data, which can ultimately lead to improved business outcomes.

2. Bias

One of the biggest issues with current machine learning systems is the potential for bias, which can have significant negative impacts on individuals and specific groups. It is important to fight bias in machine learning systems, and XAI tools can help produce more equitable outcomes for everyone.

One of the most notable examples of bias in machine learning systems is COMPAS (Correctional Offender Management Profiling for Alternative Sanctions). COMPAS is used in courts across the United States to predict the likelihood that a defendant will become a recidivist. However, in 2016 ProPublica published a groundbreaking article reporting that the model's false positive rate for recidivism was nearly twice as high for Black defendants (45%) as for white defendants (23%). This occurred as a result of bias in the underlying data used to train the model. Because COMPAS is used to inform pretrial detention, trial, sentencing, and parole decisions, it had real negative effects on Black defendants. There are many other examples of bias in machine learning systems that have produced negative outcomes.
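The disparity ProPublica reported is a gap in false positive rates: among people who did not go on to reoffend, the share the model nonetheless flagged as likely to reoffend. A check like this can be run per group before a model is deployed. The sketch below is a minimal illustration under the assumption that labels and predictions are binary (1 = reoffended / predicted to reoffend). The group names and records are made up for illustration and are not COMPAS data. With ProPublica's reported figures, the same ratio works out to 0.45 / 0.23 ≈ 1.96, that is, nearly twice as high.

```python
from collections import defaultdict


def false_positive_rates(groups, y_true, y_pred):
    """False positive rate per group: FP / (FP + TN), computed over actual negatives."""
    false_positives = defaultdict(int)
    negatives = defaultdict(int)
    for group, truth, prediction in zip(groups, y_true, y_pred):
        if truth == 0:  # only people who did NOT reoffend can be false positives
            negatives[group] += 1
            if prediction == 1:
                false_positives[group] += 1
    return {g: false_positives[g] / negatives[g] for g in negatives}


# Illustrative toy records for two hypothetical groups, "A" and "B".
groups = ["A"] * 10 + ["B"] * 10
y_true = [0] * 8 + [1] * 2 + [0] * 8 + [1] * 2
y_pred = [1] * 4 + [0] * 4 + [1] * 2 + [1] * 2 + [0] * 6 + [1] * 2

rates = false_positive_rates(groups, y_true, y_pred)
print(rates)  # {'A': 0.5, 'B': 0.25}

ratio = rates["A"] / rates["B"]
print(ratio)  # 2.0: group A's non-reoffenders are flagged twice as often
```

Note that both groups here have the same number of true positives correctly flagged; the disparity only shows up once errors are broken down by group, which is why per-group metrics matter.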

The most significant cause of bias is in training data. The worst outcome is when bias is detected after it has had a negative impact on a specific person or group. XAI tools can help data scientists and machine learning engineers understand how outcomes are generated before models are deployed in order to target bias. For example, XAI tools can prompt data scientists to collect more diverse training data or help engineers tune more equitable models.

The risks of bias in machine learning models are significant and can be divided into two categories: direct and indirect. Direct risks include loss of revenue, damage to brand reputation, legal action, and regulatory fines. Indirect risks include loss of customer trust and potential long-term damage to business relationships. As the examples above highlight, biased machine learning models can also lead to unintended consequences such as perpetuating systemic discrimination. Organisations that fail to address bias in their machine learning models not only risk financial and legal repercussions themselves but also entrench larger societal issues. By implementing XAI tools, businesses can increase transparency and accountability, which can help mitigate these risks.

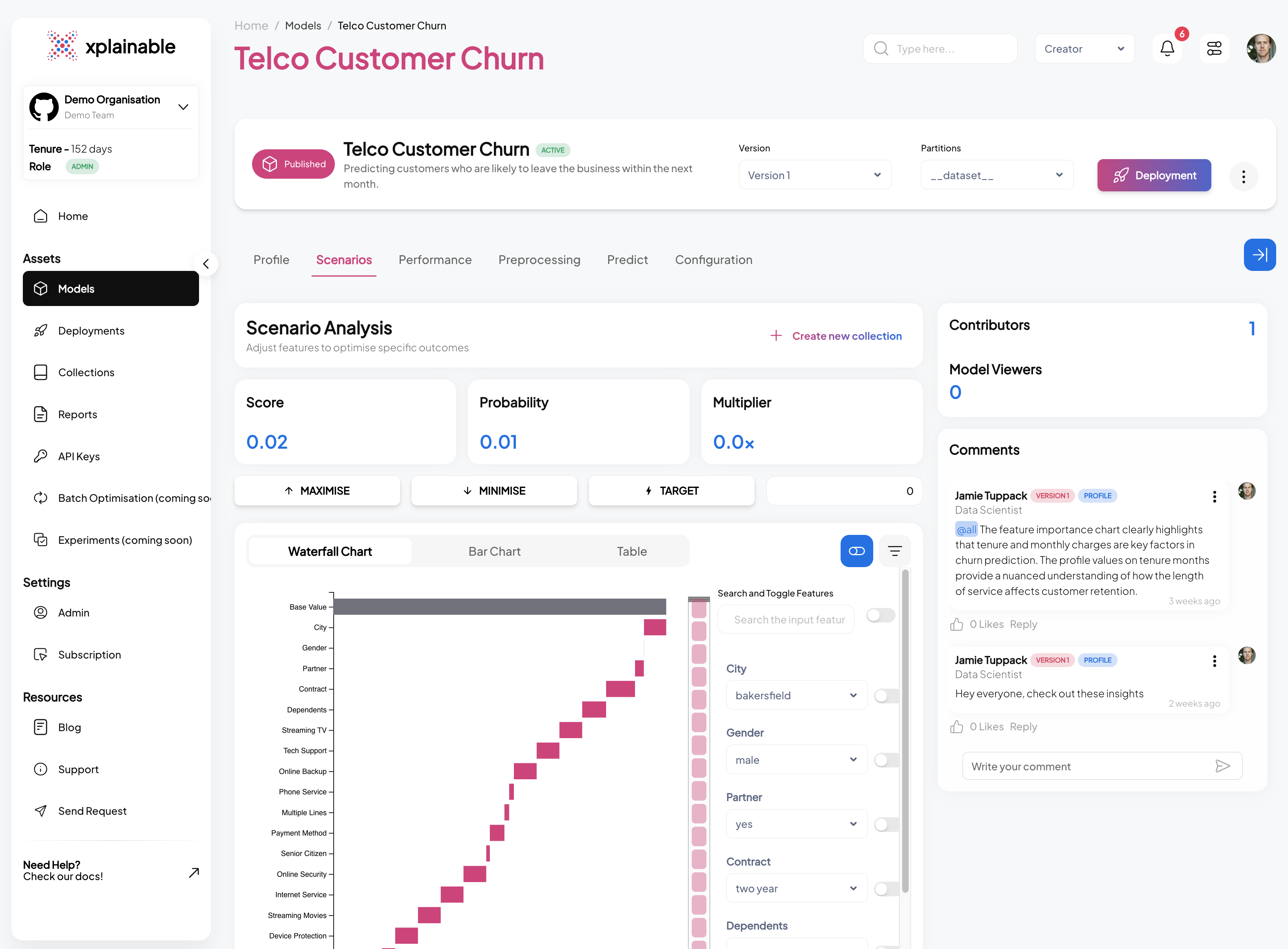

Xplainable's machine learning algorithms were built from the ground up to help data scientists and engineers identify the most important features of a machine learning model efficiently and effectively.

3. Accountability

Machine learning models have the potential to make decisions that have far-reaching consequences for individuals, groups, and society as a whole. Therefore, it is essential to ensure that these models are designed and implemented in an ethical manner. Accountability and transparency in machine learning models are an opportunity to build trust or diminish it.

Accountability is a critical component of ethical AI and is closely linked to transparency. By making AI models transparent, we can also make them more accountable. Transparency is essential for ensuring that machine learning models are making decisions based on valid and relevant information. By providing transparency into the decision-making process of machine learning models, XAI can help ensure that these systems are making decisions that align with ethical and legal standards. Machine learning models are subject to various legal frameworks, such as data protection regulations. These frameworks often require that organisations take steps to ensure that their machine learning models are transparent and accountable. Legal standards are especially important in areas such as healthcare or criminal justice, where the decisions made by machine learning systems can have significant impacts on people's lives. Failure to comply with these legal requirements can result in significant legal consequences, such as fines or legal action.

Summary

In summary, XAI tools are a crucial part of the machine learning workflow. XAI tools:

- help data scientists identify and mitigate biases in training data

- help organisations create accountability, transparency and trust

- help machine learning engineers improve the accuracy of models

- help organisations drive finely tuned action with explainable predictions

However, it is important to note that XAI is not a silver bullet solution for all problems related to machine learning. Rather, it is one tool that can be used to help improve the safety and fairness of machine learning systems.

Get started with xplainable

Build transparent ML models with real-time explanations and deploy in minutes.