The Importance Of Explainable Machine Learning

Driving Tangible Outcomes While Ensuring Ethical and Fair Use Of Technology

Machine learning (ML) models are prevalent in our daily lives and make decisions that have significant and lasting impacts on businesses and individuals. Over the last decade, as computing capability has developed, these decision-making machines have become highly complex, dominating their traditional ML predecessors in industry applications, all in the pursuit of predictive accuracy.

"Surely, if these models are more accurate, that's all that matters, right?" you might be thinking. Well, no. We rarely talk about the downside of using such complex models. Generally, the more complex the model, the more "black box" it is in nature. In this context, "black box" refers to the inability of a model to explain its predictions and decisions in a human-understandable way. Complex models inhibit human trust in outcomes and make it difficult to act on predictions, even in critical business operations. Their complexity also masks potential underlying issues, such as bias and discrimination, that can go on to affect the rights of individuals and groups on an enormous scale.

Automated systems have limited effectiveness when people cannot understand the decision-making process of the ML models that drive them. There is a need to develop more intelligent, autonomous, and symbiotic systems to merge machines' highly predictive capability with humans' expertise. Machine learning models must be explainable and understood by human decision-makers to drive trust and tangible action and to mitigate business and societal risk.

What is Explainable Machine Learning?

"Explainability" refers to transparency in machine learning models' decision-making, specifically in a human-readable format. Explanations are available at the global, regional, and local levels, which lets ML engineers, business domain experts, and end users interrogate models at different granularities:

- Global refers to identifying macro-level patterns in model decisions that can help identify population-level model behaviour. For example, "Is age or income a bigger contributor to loan default, and by how much?"

- Regional refers to model decision-making over a subset or cohort within a population. For example, "How does our model treat different age groups?"

- Local refers to explaining individual predictions at the most micro of levels. For example, "What were the key drivers behind disapproving the applicant's loan?"

Having these questions answered enables us to grasp and validate decisions and outputs produced by machine learning algorithms, build trust in outcomes, and allow businesses to take a responsible approach to ML development by highlighting potential biases.
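The three levels can be illustrated with a minimal sketch. It assumes a simple additive (linear) risk score, where each feature's contribution is its weight multiplied by how far the applicant sits from the average applicant. The applicants, the weights, and the function names are all hypothetical and chosen only for illustration; real explainability tooling will differ.

```python
from statistics import mean

# Hypothetical loan applicants (age in years, income in $k). Illustrative values only.
applicants = [
    {"age": 22, "income": 30},
    {"age": 35, "income": 60},
    {"age": 48, "income": 90},
    {"age": 61, "income": 40},
]

# Hypothetical weights of a linear default-risk score (assumed, not fitted to data).
WEIGHTS = {"age": -0.01, "income": -0.005}

# The "average applicant" used as the reference point for every explanation.
BASELINE = {f: mean(a[f] for a in applicants) for f in WEIGHTS}


def local_explanation(row):
    """Local: each feature's contribution to one applicant's score, relative to the average."""
    return {f: WEIGHTS[f] * (row[f] - BASELINE[f]) for f in WEIGHTS}


def cohort_explanation(rows):
    """Global or regional: mean absolute contribution per feature over a set of rows."""
    return {f: mean(abs(local_explanation(r)[f]) for r in rows) for f in WEIGHTS}


global_view = cohort_explanation(applicants)                                   # whole population
regional_view = cohort_explanation([a for a in applicants if a["age"] < 40])   # under-40 cohort
local_view = local_explanation(applicants[0])                                  # one applicant

print("global:", global_view)
print("regional (under 40):", regional_view)
print("local (first applicant):", local_view)
```

The same per-row contributions feed all three views; only the set of rows being summarised changes.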

What Are The Advantages of Explainable Machine Learning?

McKinsey's QuantumBlack published an article in late 2022 that states:

"Our research finds that companies seeing the biggest bottom-line returns from AI—those that attribute at least 20 percent of EBIT to their use of AI—are more likely than others to follow best practices that enable explainability. Further, organizations that establish digital trust among consumers through practices such as making AI explainable are more likely to see their annual revenue and EBIT grow at rates of 10 percent or more."

The benefits of explainable machine learning are substantial when applied correctly and can help businesses in several ways.

Insight Generation and Actionable Predictions for Business Value

Understanding how a model makes decisions can help drive quick and nuanced business optimisations. Businesses can achieve optimised outcomes by surfacing new, previously unknown insights (global level) and highlighting specific actions to optimise individual predictions (local level).

If a business can ascertain the underlying factors contributing to a prediction, it can understand how to influence its outcome.

For example, using asset health data, a resources company predicts that one of its trucks has a 54% chance of engine failure in the next two weeks. The prediction alone is helpful because the company can assign a technician to assess the truck. However, suppose you equip the same technician with the knowledge that a high operating temperature contributed to 18% of the prediction, while high soot production in the oil sampling contributed 14%. In that case, it may reduce the time and cost of resolving the issue as they have a targeted prognosis.
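As a rough sketch of the truck example, the prediction can be treated as a baseline plus additive per-feature contributions. This assumes the 18% and 14% figures are percentage points of the 54% prediction; the baseline and the remaining contributions are hypothetical values chosen so the parts sum to 0.54, and the feature names are invented for illustration.

```python
# Additive breakdown of a single engine-failure prediction.
# 0.18 and 0.14 come from the example above (read here as percentage points).
# The baseline and the other entries are hypothetical, chosen so the total is 0.54.
baseline = 0.15  # assumed fleet-average failure probability

contributions = {
    "operating_temperature": 0.18,
    "oil_soot_level": 0.14,
    "engine_hours": 0.05,   # hypothetical
    "ambient_dust": 0.02,   # hypothetical
}

prediction = baseline + sum(contributions.values())


def top_drivers(contribs, n=2):
    """Return the n features that push the prediction up the most."""
    ranked = sorted(contribs.items(), key=lambda kv: kv[1], reverse=True)
    return ranked[:n]


print(f"Predicted failure risk: {prediction:.0%}")
for name, value in top_drivers(contributions):
    print(f"  check {name}: +{value:.0%}")
```

A ranked list like this is what gives the technician a targeted starting point rather than a bare probability.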

Faster Development Times

For data scientists, building models is a highly iterative process prolonged by the time associated with interrogating the model for overfitting (where the model performs well on training data but poorly on real-world data) or underfitting (where the model is too simple and performs poorly on both). Explainability techniques can highlight these issues quickly, enabling faster overall development times and more productive teams.
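One common symptom check, separate from any explainability technique, is to compare a model's score on training data with its score on held-out data. The sketch below assumes scores in the range 0 to 1 where higher is better; the function name and both thresholds are hypothetical defaults that would need tuning for a real problem.

```python
def diagnose_fit(train_score, test_score, min_score=0.7, max_gap=0.1):
    """Classify model fit from training and held-out scores (0 to 1, higher is better).

    min_score and max_gap are hypothetical thresholds, not established standards.
    """
    if train_score < min_score:
        # The model cannot even fit the data it was trained on.
        return "underfitting"
    if train_score - test_score > max_gap:
        # Strong on training data, noticeably weaker on unseen data.
        return "overfitting"
    return "ok"


print(diagnose_fit(0.99, 0.70))
print(diagnose_fit(0.55, 0.53))
print(diagnose_fit(0.85, 0.82))
```

A check like this flags that something is wrong; explanations of which features the model leans on then help show why.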

Developing Trust and Ensuring Accountability

Transparent models can help build trust with users, stakeholders, and regulatory agencies by clearly demonstrating how they draw their conclusions. This detailed level of explainability allows data scientists to speak the same language as domain experts to ensure the alignment of objectives and outcome monitoring. When a non-technical domain expert can question the development of a model, it is also a more reliable mechanism to hold data scientists to account for the models they build.

Risk Mitigation & Compliance

Being able to highlight model decision-making processes allows developers to quickly detect and prevent biases that can creep into machine learning algorithms and to identify gaps in data collection and quality. It also brings non-technical domain experts into the equation, who may identify biases that the developers missed.
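A simple regional check of this kind compares outcome rates across cohorts. The sketch below uses hypothetical loan decisions and a hypothetical `age_group` field; the gap between the highest and lowest approval rate is only a starting signal for investigation, not proof of bias on its own.

```python
from collections import defaultdict


def approval_rate_by_group(records, group_key):
    """Return the share of approved decisions for each value of group_key."""
    totals = defaultdict(int)
    approved = defaultdict(int)
    for record in records:
        group = record[group_key]
        totals[group] += 1
        approved[group] += 1 if record["approved"] else 0
    return {group: approved[group] / totals[group] for group in totals}


# Hypothetical model decisions, for illustration only.
decisions = [
    {"age_group": "under_30", "approved": False},
    {"age_group": "under_30", "approved": False},
    {"age_group": "under_30", "approved": True},
    {"age_group": "under_30", "approved": False},
    {"age_group": "30_plus", "approved": True},
    {"age_group": "30_plus", "approved": True},
    {"age_group": "30_plus", "approved": False},
    {"age_group": "30_plus", "approved": True},
]

rates = approval_rate_by_group(decisions, "age_group")
gap = max(rates.values()) - min(rates.values())

print(rates)
print(f"Approval-rate gap between groups: {gap:.0%}")
```

A large gap is a prompt for developers and domain experts to examine which features drive the difference and whether that difference is justified.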

For regulated industries, explainability techniques provide AI regulators with a mechanism to ensure regulatory compliance.

Businesses that adopt explainability in their machine learning and AI development will be the ones who build the most trust with regulators, consumers, and users within their organisation. They will also provide the fairest outcomes and a stable foundation for themselves in a world that is becoming increasingly regulated regarding artificially generated decisions.

Businesses that prioritise these practices are more likely to grow faster and become more profitable, and those that fail to do so risk being left behind in today's competitive market.

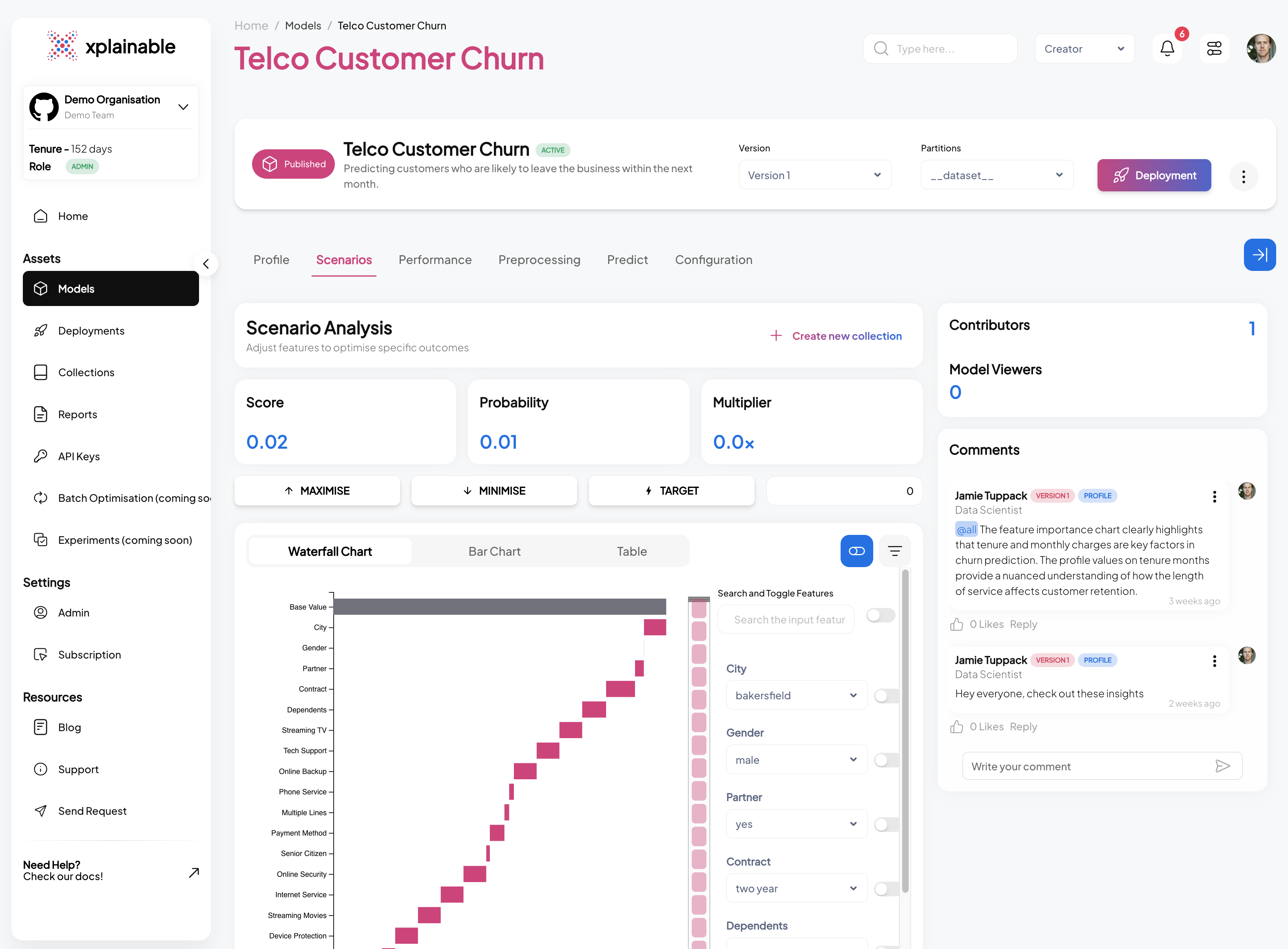
