Xplainable + MCP: Becoming the Analytical Brain for LLMs

Large Language Models (LLMs) are remarkable at generating text, summarising content, and even reasoning in natural language. But there’s a problem: they are not grounded in analytical reality.

When it comes to decision-making, most businesses can’t rely on a system that simply predicts words based on prior training. They need auditable, explainable, and persistent models that provide a solid analytical backbone.

This is where Xplainable and the Model Context Protocol (MCP) come together.

What is Model Context Protocol (MCP)?

As the team at Model Context Protocol describe it:

“Think of MCP like a USB-C port for AI applications.”

MCP provides:

- A growing list of pre-built integrations your LLM can plug into directly

- A standardised way to build custom integrations for AI apps

- An open protocol that anyone can implement and use

- Flexibility to move between apps while keeping your context with you

In short, MCP acts as the bridge between an LLM and the external systems it needs to interact with.
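To make the bridge concrete: MCP messages are JSON-RPC 2.0, and an LLM client invokes a server-side tool with a `tools/call` request. The sketch below builds such a request and a matching text result using only the standard library. The tool name `predict_loan_risk` and its `loan_id` argument are hypothetical placeholders for illustration, not names from the Xplainable integration.

```python
import json


def build_tool_call(request_id: int, tool_name: str, arguments: dict) -> str:
    """Serialise an MCP `tools/call` request as a JSON-RPC 2.0 message."""
    message = {
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    }
    return json.dumps(message)


def build_tool_result(request_id: int, text: str) -> str:
    """Serialise a successful tool result carrying a single text content item."""
    message = {
        "jsonrpc": "2.0",
        "id": request_id,
        "result": {
            "content": [{"type": "text", "text": text}],
            "isError": False,
        },
    }
    return json.dumps(message)


# Hypothetical tool name and arguments, chosen only for this example.
request = build_tool_call(1, "predict_loan_risk", {"loan_id": "L-1001"})
response = build_tool_result(1, "risk=0.27")

print(request)
print(response)
```

The request and response share the same `id`, which is how the client matches a result to the call that produced it.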

The Current Problem: Black-Box Models

Machine learning models today are often black boxes. They might provide high accuracy, but:

- Their predictions are difficult (or impossible) to interpret.

- They can’t be easily audited.

- They don’t provide the level of transparency needed for business-critical decisions.

As a result, they struggle to gain trust outside of technical teams. And when these models are combined with LLMs, the problem compounds — hallucinations meet opacity.

At Xplainable, we believe in a simple principle:

What’s explainable to a human is interpretable to a machine.

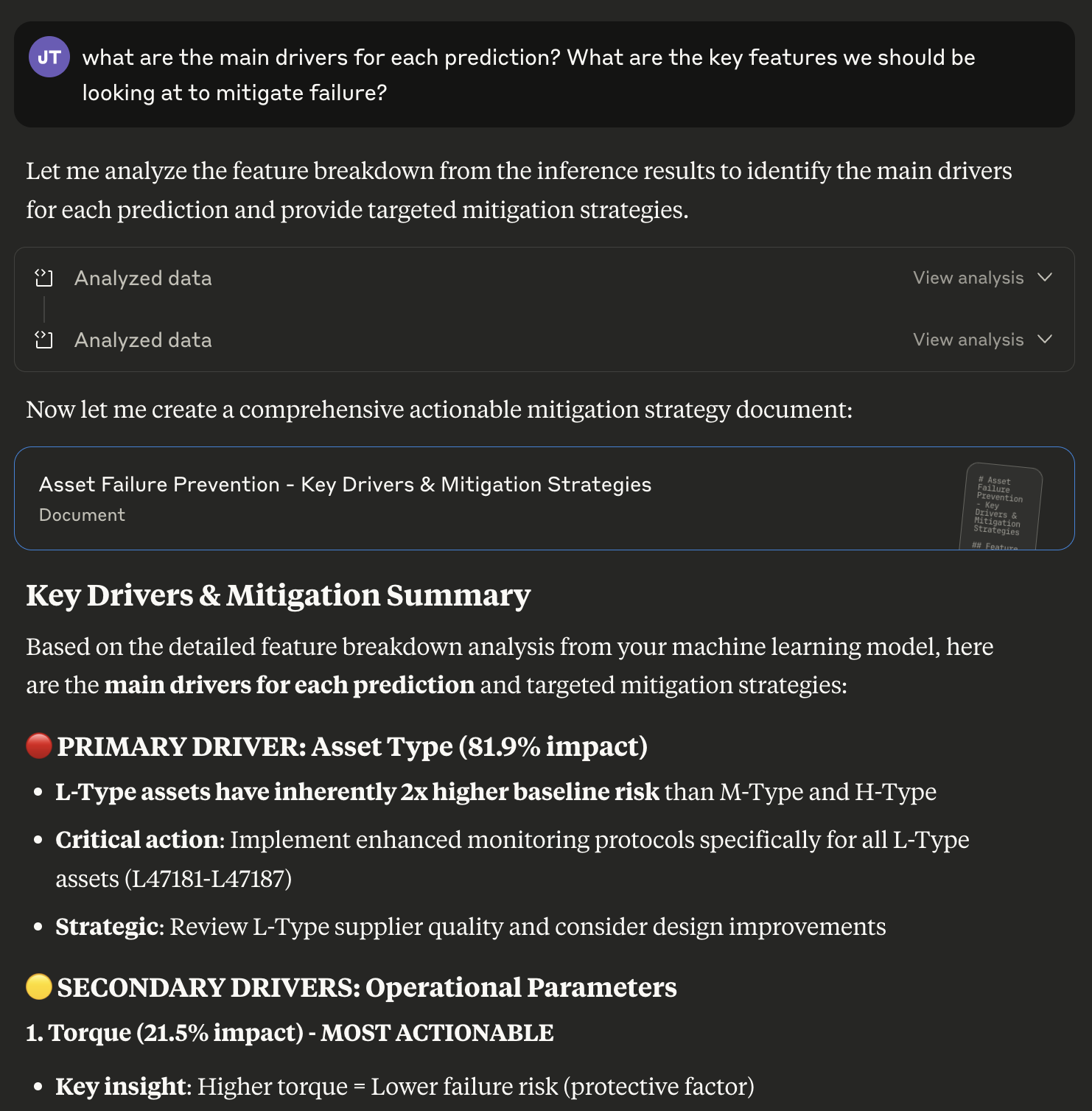

If humans can understand the reasoning behind a model’s prediction, then so can an LLM. And once an LLM can reliably interpret and explain model outputs, it can play a powerful role in decision intelligence.

Why Xplainable + MCP Matters

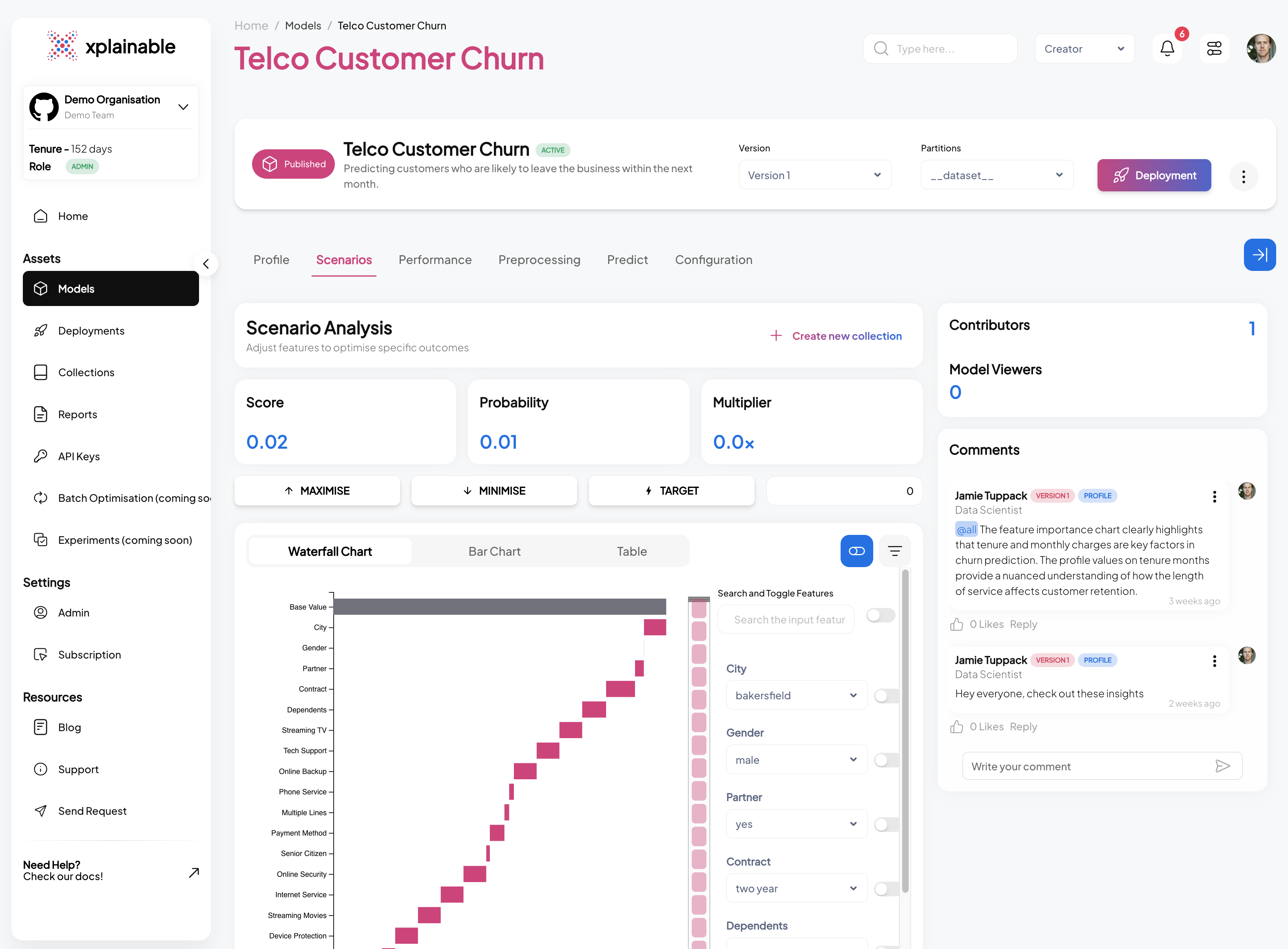

By integrating Xplainable with MCP, we give LLMs direct access to:

- Explainable models they can query in real time.

- Persistent deployments that provide continuity across days, weeks, or months.

- Optimisation tools that let them recommend the best course of action, not just describe what happened.

This changes the game for businesses using AI in decision-making.

Imagine an LLM that doesn’t just answer your questions with generic knowledge, but can actually query live, explainable models that are tailored to your data and workflows.

Practical Use Cases

Here’s what this looks like in the real world:

Finance: “Which loans are most at risk this quarter?” The LLM queries a risk model deployed in Xplainable, returning not just a probability but a transparent breakdown of the key drivers.

Healthcare: “What’s the likelihood this patient will need follow-up care?” Instead of hallucinating an answer, the LLM calls a predictive model trained on real clinical outcomes, grounded in explainable factors that physicians can trust.

Supply chain: “If shipping costs rise by 10%, what’s the best way to minimise overall expense?” The LLM uses Xplainable’s scenario analysis and optimisation tools to simulate the impact and suggest actions, backed by a model that can be explained, audited, and adjusted.
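To illustrate what "a probability plus a transparent breakdown of the key drivers" can look like for the loan-risk question, here is a minimal sketch of an additive scoring model. It is a generic illustration under stated assumptions, not Xplainable's algorithm or API: the feature names, weights, and base score are all made up.

```python
import math

# Hypothetical additive model: each feature adds a log-odds contribution
# to a base score. All numbers here are invented for illustration.
BASE_LOG_ODDS = -2.0
CONTRIBUTIONS = {
    "missed_payments": lambda v: 0.6 * v,
    "debt_to_income": lambda v: 2.0 * v,
    "years_as_customer": lambda v: -0.1 * v,
}


def explain_prediction(features: dict) -> dict:
    """Return a probability and the per-feature contributions behind it."""
    drivers = {name: fn(features[name]) for name, fn in CONTRIBUTIONS.items()}
    log_odds = BASE_LOG_ODDS + sum(drivers.values())
    probability = 1.0 / (1.0 + math.exp(-log_odds))
    # Rank drivers by absolute impact so the largest effects come first.
    ranked = sorted(drivers.items(), key=lambda kv: abs(kv[1]), reverse=True)
    return {"probability": probability, "drivers": ranked}


loan = {"missed_payments": 2, "debt_to_income": 0.4, "years_as_customer": 5}
result = explain_prediction(loan)

print(f"risk: {result['probability']:.3f}")
for name, contribution in result["drivers"]:
    print(f"  {name}: {contribution:+.2f}")
```

Because the score is a plain sum, every driver can be read off directly, which is the property that makes the output easy for both a person and an LLM to explain.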

Persistence Through Time

One of the biggest limitations of LLMs is their lack of memory across sessions. While fine-tuning and vector databases help, they don’t provide the long-term persistence needed for model-driven decision-making.

With Xplainable, MCP unlocks the ability to:

- Fetch predictions on a cadence (daily, weekly, etc.)

- Compare results over time

- Maintain a consistent analytical foundation that an LLM can build on

This persistence opens the door to business processes that simply aren’t possible with “stateless” LLMs.
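As a rough sketch of what fetching on a cadence and comparing over time involves, the example below stores dated prediction snapshots and computes the change per entity between two dates. The `PredictionHistory` class, the loan IDs, and the scores are hypothetical; in practice the snapshots would come from a deployed model rather than hard-coded values.

```python
from datetime import date


class PredictionHistory:
    """In-memory store of dated prediction snapshots (hypothetical helper)."""

    def __init__(self):
        self._snapshots = {}

    def record(self, day: date, predictions: dict) -> None:
        """Save a copy of the predictions fetched on a given day."""
        self._snapshots[day] = dict(predictions)

    def compare(self, earlier: date, later: date) -> dict:
        """Return the change in score for every entity present on both days."""
        before = self._snapshots[earlier]
        after = self._snapshots[later]
        shared = before.keys() & after.keys()
        return {key: after[key] - before[key] for key in shared}


history = PredictionHistory()
# Made-up weekly risk scores for two loans.
history.record(date(2024, 1, 1), {"L-1001": 0.20, "L-1002": 0.50})
history.record(date(2024, 1, 8), {"L-1001": 0.35, "L-1002": 0.45})

changes = history.compare(date(2024, 1, 1), date(2024, 1, 8))
for loan_id in sorted(changes):
    print(f"{loan_id}: {changes[loan_id]:+.2f}")
```

An LLM given these deltas can answer "what changed since last week?" from recorded model outputs instead of from its own recollection of earlier conversations.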

Towards True Optimisation

LLMs on their own are descriptive at best. They can explain what happened, or generate options based on prior data.

But combine them with Xplainable models, and you move into prescriptive analytics:

“If this input changes, what action should I take to maximise efficiency or minimise cost?”

This means LLMs can go from narrators to strategic advisors — recommending actions that are explainable, auditable, and directly tied to outcomes.
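A prescriptive question of this kind can be sketched as a small scenario comparison: apply the input change, cost each candidate action, and pick the cheapest. The example below uses a simple exhaustive comparison over three hypothetical actions with invented prices. It is not Xplainable's optimiser, only an illustration of the shape of the problem.

```python
# Hypothetical candidate actions. "exposed" marks whether the action's
# per-unit rate is affected by the shipping price increase.
OPTIONS = {
    "keep_current_carrier": {"unit_shipping": 5.00, "fixed": 0.0, "exposed": True},
    "switch_carrier": {"unit_shipping": 5.20, "fixed": 500.0, "exposed": False},
    "consolidate_shipments": {"unit_shipping": 4.00, "fixed": 2000.0, "exposed": True},
}


def recommend(units: int, shipping_increase: float):
    """Cost every option under the scenario and return the cheapest one."""
    costs = {}
    for name, option in OPTIONS.items():
        rate = option["unit_shipping"]
        if option["exposed"]:
            rate *= 1 + shipping_increase
        costs[name] = units * rate + option["fixed"]
    best = min(costs, key=costs.get)
    return best, costs


# Scenario: shipping costs rise by 10% on a volume of 5,000 units.
best_action, scenario_costs = recommend(units=5000, shipping_increase=0.10)

for name, cost in scenario_costs.items():
    print(f"{name}: {cost:,.2f}")
print("recommended:", best_action)
```

Each option's cost is fully traceable to its rate, volume, and fixed charge, so the recommendation can be audited line by line.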

Here’s how the flow works in practice:
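As a minimal sketch of such a flow, assuming a single hypothetical tool: the LLM selects a tool and its arguments, the server dispatches the call to a deployed model, and the model's prediction and drivers come back as text the LLM can explain to the user. The function `loan_risk_model`, the tool name, and the returned numbers are stand-ins, not part of the actual integration.

```python
def loan_risk_model(loan_id: str) -> dict:
    """Stand-in for a deployed model; returns a fixed, made-up result."""
    return {
        "loan_id": loan_id,
        "probability": 0.27,
        "drivers": [("missed_payments", 0.9), ("debt_to_income", 0.4)],
    }


# Step 2 target: a registry mapping tool names to callables (hypothetical).
TOOLS = {"predict_loan_risk": loan_risk_model}


def handle_tool_call(name: str, arguments: dict) -> dict:
    """Dispatch a tool call and wrap the outcome as MCP-style text content."""
    if name not in TOOLS:
        return {
            "content": [{"type": "text", "text": f"Unknown tool: {name}"}],
            "isError": True,
        }
    # Step 3: the model returns a prediction together with its drivers.
    result = TOOLS[name](**arguments)
    drivers = ", ".join(f"{feat} ({value:+.2f})" for feat, value in result["drivers"])
    text = (
        f"Loan {result['loan_id']}: risk {result['probability']:.0%}; "
        f"key drivers: {drivers}"
    )
    # Step 4: the text goes back to the LLM, which phrases the final answer.
    return {"content": [{"type": "text", "text": text}], "isError": False}


# Step 1 (simulated): the LLM has chosen a tool and arguments for the question.
reply = handle_tool_call("predict_loan_risk", {"loan_id": "L-1001"})
print(reply["content"][0]["text"])
```

The LLM never computes the risk itself; it only decides which tool to call and how to present the result.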

The Road Ahead

This first MCP integration with Xplainable is just the beginning.

Over the coming weeks, we’ll expand it to cover the full lifecycle of models:

- Model Management ✅

- Deployment ✅

- Prediction ✅

- Training

- Explanation

- Optimisation

Our mission is simple but ambitious:

To become the analytical brain for LLMs.

This integration allows LLMs to move beyond surface-level answers and deliver decisions that are explainable, auditable, and persistent.

Try It Out

If you’d like to experiment with our MCP integration, you can find the full setup documentation on GitHub, or feel free to reach out to us directly.
