The Robo-Debt Debacle

The Urgency of Explainable Machine Learning and Transparent Algorithms

The growing influence of artificial intelligence (AI) in our daily lives has created an urgent need for explainable machine learning. Explainability means being able to understand and interpret a model's decisions and predictions, which is vital for building trust, identifying and fixing errors, and checking that AI-driven decisions are fair.

A striking example that underscores the importance of explainability is the infamous Australian "robo-debt" scandal. In this debacle, an automated system was developed to identify and recover overpaid welfare payments. However, the oversimplified algorithm, which relied on averaging annual income data across fortnights, falsely accused numerous individuals of owing money. The system's glaring lack of transparency made it difficult for those falsely accused to dispute the claims, and the scheme ultimately led to a government inquiry and a court case, after which many debts were waived.

The Shortcomings of the Robo-Debt System

The robo-debt system's flaws can be traced back to its failure to account for critical factors such as income changes over time, data discrepancies, and other nuances that affect calculation accuracy. Better feature engineering and process design could have improved the system by incorporating elements such as:

- Human review: A "human in the loop" process could have caught errors or inaccuracies in the data, reducing false accusations.

- Income and employment history: Factoring in income and employment changes would have helped account for data discrepancies.

- Demographic information: Age, gender, and location data could have helped account for factors affecting individuals' ability to repay.

- Financial hardship information: Medical expenses and other financial hardships could have been considered in repayment calculations.

- Communication history: Phone calls or emails could have provided better insight into individuals' situations.
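
To make the "income changes" failure concrete, here is a minimal sketch of how spreading an annual income total evenly across fortnights can produce a debt that does not exist. The benefit rule, the free area, the taper rate, and the example person are all invented for illustration and are not the actual welfare rules or the system's real code.

```python
def entitlement(fortnightly_income, base_payment=500.0, free_area=300.0, taper=0.5):
    """Hypothetical benefit rule (not the real one): the payment is reduced by
    `taper` dollars for every dollar earned above `free_area`."""
    reduction = max(0.0, fortnightly_income - free_area) * taper
    return max(0.0, base_payment - reduction)


def apparent_debt(assumed_incomes, payments_made):
    """Sum, over fortnights in which a payment was made, the amount by which
    the payment exceeds the entitlement implied by the assumed income."""
    debt = 0.0
    for income, paid in zip(assumed_incomes, payments_made):
        if paid > 0:
            debt += max(0.0, paid - entitlement(income))
    return debt


# Illustrative person: 13 fortnights unemployed and on benefits, then
# 13 fortnights employed at 2,000 per fortnight with no benefits claimed.
actual_incomes = [0.0] * 13 + [2000.0] * 13
payments_made = [entitlement(i) if i == 0.0 else 0.0 for i in actual_incomes]

# Averaging treats the annual total as if it were earned evenly all year.
average = sum(actual_incomes) / len(actual_incomes)
averaged_incomes = [average] * len(actual_incomes)

debt_from_actual = apparent_debt(actual_incomes, payments_made)
debt_from_averaging = apparent_debt(averaged_incomes, payments_made)
print(debt_from_actual, debt_from_averaging)  # 0.0 4550.0
```

Under these assumed numbers the person was paid correctly in every fortnight, yet the averaged calculation reports a debt of 4,550. Using actual fortnightly income as a feature removes the error entirely.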

The Ripple Effects of the Scandal

The robo-debt scandal had far-reaching consequences, not only for the individuals directly affected but also for the Australian government and public trust in automated systems. In 2020, the government announced an AUD 1.2 billion settlement for the affected individuals, marking one of the largest class-action settlements in Australian history. The scandal has also spurred discussions around the world about the ethical implications of AI-driven decision-making processes and the need for increased transparency in algorithmic systems.

The Importance of Transparency and Regulation

The robo-debt debacle emphasizes the need to prioritize explainability in algorithms and avoid "black box" models in high-stakes decision-making. ABC News created an engaging scrollytelling piece that walks through current machine learning methodologies and approaches to enhancing transparency.

Regulation and governance play a critical role in ensuring AI and machine learning systems are transparent, accountable, and fair. While laws like GDPR, HIPAA, and CCPA exist, they don't specifically target AI and machine learning. As AI becomes more prevalent, systems will need to be built so that they can comply with both current and future regulations.

If the robo-debt system had embraced "two-way transparency," individuals could have challenged accusations more effectively: understanding how the algorithm reached its figure would have let them identify errors and present evidence in their defence. Transparency would also have allowed advocacy groups and regulators to uncover systemic biases or errors in the algorithm, demand rectification, and monitor the system's performance and compliance with laws and regulations.
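
As a rough illustration of what this could look like in practice, the sketch below attaches a per-input breakdown to every decision from a simple additive model, so that an affected person can see which inputs drove the result and what changes if a disputed input is corrected. The feature names, weights, and helper functions are hypothetical and do not represent any particular library's API.

```python
from dataclasses import dataclass


@dataclass
class Explanation:
    score: float
    contributions: dict  # feature name -> amount it added to the score


def explain(weights, bias, features):
    """Additive model: the score is the bias plus one contribution per feature,
    so every part of the decision can be shown to the person affected."""
    contributions = {name: weights[name] * value for name, value in features.items()}
    return Explanation(score=bias + sum(contributions.values()),
                       contributions=contributions)


def top_factors(explanation, n=2):
    """Return the n features with the largest absolute contribution."""
    ranked = sorted(explanation.contributions.items(),
                    key=lambda item: abs(item[1]), reverse=True)
    return ranked[:n]


# Hypothetical weights and inputs, chosen only for illustration.
weights = {"income_above_free_area": 0.5, "fortnights_on_benefit": 10.0}
features = {"income_above_free_area": 700.0, "fortnights_on_benefit": 13.0}

original = explain(weights, 0.0, features)

# The individual disputes one input; the same function shows the effect.
corrected_features = dict(features, income_above_free_area=0.0)
corrected = explain(weights, 0.0, corrected_features)

print(top_factors(original))
print(original.score - corrected.score)
```

Because each contribution is visible, a person contesting the outcome can point at the specific input they believe is wrong rather than disputing an unexplained total.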

Future Implications and Best Practices

The robo-debt scandal serves as a cautionary tale that underscores the importance of developing AI systems that prioritize explainability and transparency. To minimize the risk of similar incidents, organizations should consider the following best practices:

- Incorporate diverse perspectives: Include input from various stakeholders, including ethicists, social scientists, and end-users, to ensure AI systems are designed with fairness and transparency in mind.

- Implement rigorous testing and validation: Before deploying AI systems, conduct thorough testing to identify and address potential biases, errors, or inaccuracies.

- Establish monitoring and feedback mechanisms: Continuously monitor AI system performance and incorporate feedback loops to iteratively improve algorithms, ensuring they remain accurate and fair over time.

- Create clear communication channels: Ensure that end-users and affected individuals can easily access information about how AI-driven decisions are made, empowering them to contest decisions and provide evidence in their defence.

- Develop ethical guidelines and frameworks: Formulate comprehensive ethical guidelines and frameworks that govern the development, deployment, and use of AI systems, ensuring they align with societal values and legal requirements.
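
The monitoring and feedback practice above could be sketched as a simple check on how often contested decisions are overturned on review. The record fields and the 20% threshold are assumptions made for this example, not an established standard.

```python
def overturn_rate(decisions):
    """Share of contested decisions that were overturned after review."""
    contested = [d for d in decisions if d["contested"]]
    if not contested:
        return 0.0
    return sum(1 for d in contested if d["overturned"]) / len(contested)


def needs_audit(decisions, max_overturn_rate=0.2):
    """Flag the system for audit when too many contested decisions are overturned."""
    return overturn_rate(decisions) > max_overturn_rate


# Hypothetical decision log: four contested decisions, two of them overturned.
decision_log = [
    {"contested": True, "overturned": True},
    {"contested": True, "overturned": False},
    {"contested": True, "overturned": True},
    {"contested": True, "overturned": False},
    {"contested": False, "overturned": False},
]

print(overturn_rate(decision_log), needs_audit(decision_log))
```

A high overturn rate is only one possible signal, but it is cheap to compute and ties the feedback loop directly to the experience of the people affected.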

The robo-debt scandal highlights the urgent need for explainability in machine learning and algorithms to ensure fair, accurate, and unbiased decision-making. Two-way transparency is vital for achieving this goal, empowering both end-users and organizations to understand and enhance AI-driven systems.

By learning from the robo-debt scandal and implementing best practices, we can work towards a future where AI and machine learning technologies are developed and deployed responsibly. This will not only foster trust in AI systems but also harness their potential to improve lives and make a positive impact on society.

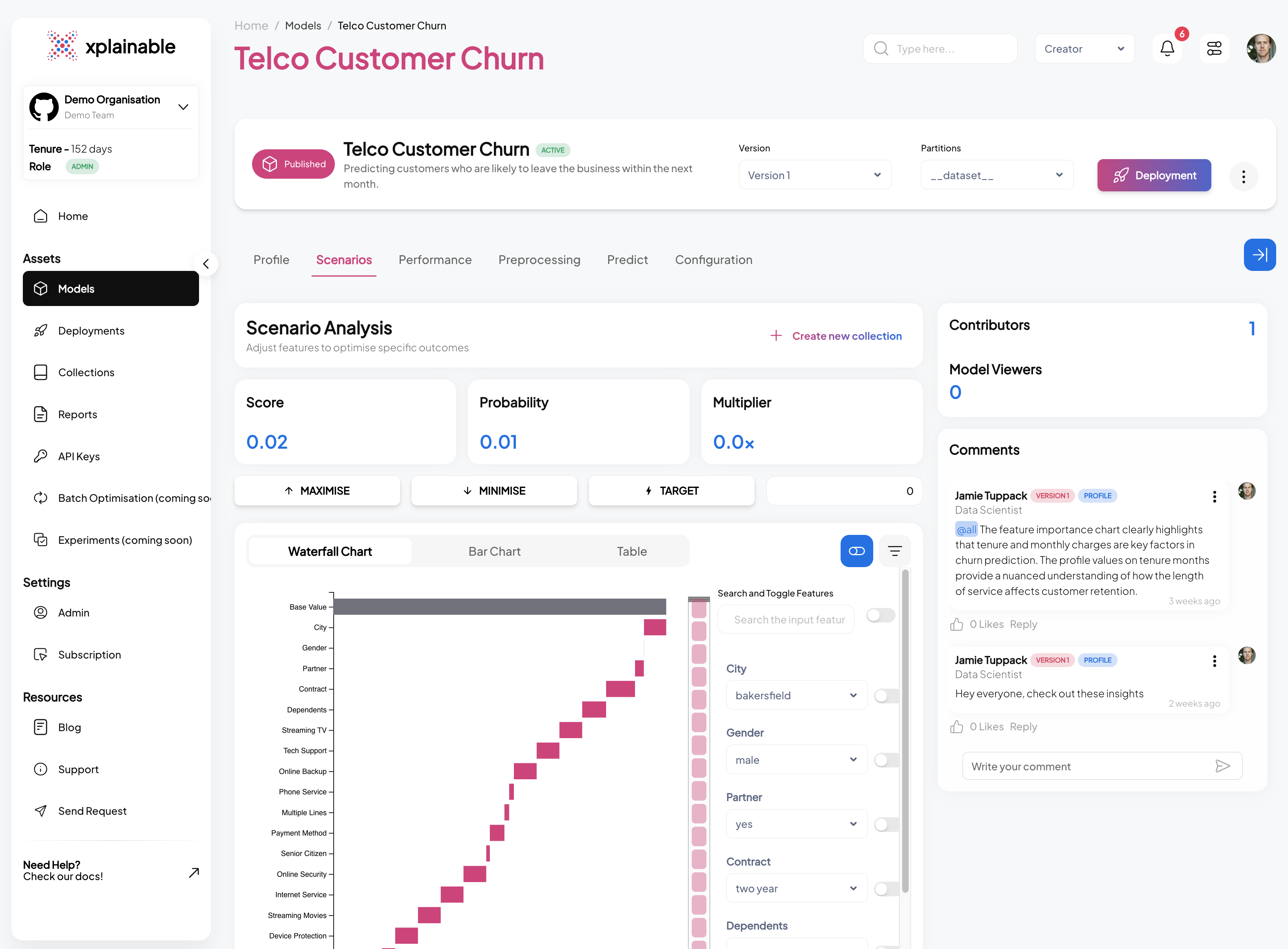
